CRO for Small Businesses: Optimizing Low Traffic Sites for Conversions

Low traffic doesn’t mean CRO is off the table. Learn the different types of validation you can use to optimize for conversions.

At The Good, we love to use the phrase, “Let’s test it.” There’s no sense in making assumptions or guessing what an audience wants. It’s smarter, and better for your budget, to run experiments that show what actually boosts your conversion rate.

A/B testing is the standard experimentation methodology for digital marketing. With enough traffic, it can produce statistically significant results at a high level of confidence. The challenge is that it requires a decent amount of web traffic. If you don’t have enough, outliers and anomalies can skew your results and obscure the truth.
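
To see why traffic matters, here is a minimal Python sketch of the standard normal-approximation sample-size formula for a two-sided, two-proportion A/B test. The 2% baseline conversion rate, 20% relative lift, 5% significance level, and 80% power are illustrative assumptions, not figures from The Good.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed in EACH variant of a two-sided,
    two-proportion A/B test (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Illustrative assumptions: 2% baseline conversion rate, hoping to detect a 20% lift.
needed = sample_size_per_variant(baseline_rate=0.02, relative_lift=0.20)
print(f"Visitors needed per variant: {needed:,}")
```

Under those assumptions the function asks for roughly 21,000 visitors per variant, a volume a low-traffic store may take months to accumulate. Testing for a bigger lift lowers the requirement, because large effects are easier to detect.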

Fortunately, A/B testing isn’t the only conversion optimization process you can use to make smart decisions. Other tools can give you sound insight to help you reach your conversion goals.

In this article, we discuss the different types of testing that business owners with smaller sites and low traffic can utilize to optimize for conversions. But first, it’s important to understand why you can still test even if your site doesn’t get many visitors.

The Confidence/Tolerance Relationship

Confidence is an important part of statistical analysis. It refers to the level of certainty we have that the results of a test accurately represent the real world, expressed as a percentage. Note: Confidence rate should not be confused with the success rate of the experiment.

If a test yields a confidence rate of 95%, we can be pretty sure that the results of the test align with the results in the real world. 50% confidence means the results could be accurate, but there’s an equal chance they could be totally off base.

Low traffic means small sample sizes, and tests built on small samples tend to yield low confidence values. That makes it hard to tell whether the results of your test represent what will happen in the real world. This is true for macro conversions like sales and for micro conversions like category browsing, filtering, or add-to-carts.
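
The effect of sample size on confidence is easy to see in code. This sketch assumes "confidence" means one minus the one-sided p-value of a pooled two-proportion z-test, which is one common way testing tools report it; definitions vary between tools, and the conversion counts are invented for illustration.

```python
import math
from statistics import NormalDist


def confidence_b_beats_a(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """One-sided confidence that B converts better than A.

    Computed as 1 - p-value of a pooled two-proportion z-test. The normal
    approximation is rough when conversion counts are small.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return NormalDist().cdf((p_b - p_a) / se)


# Same 3% vs. 4% conversion rates at two very different traffic levels (invented numbers).
small = confidence_b_beats_a(3, 100, 4, 100)
large = confidence_b_beats_a(300, 10_000, 400, 10_000)
print(f"100 visitors per variant:    {small:.1%}")
print(f"10,000 visitors per variant: {large:.2%}")
```

Both tests show identical conversion rates, yet the 100-visitor test lands near 65% confidence while the 10,000-visitor test exceeds 99.9%.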

But whenever we discuss confidence, we also have to think about tolerance.

Tolerance is your ability to deal with the consequences of being wrong. If you change your business based on the results of a test and those results turn out to be inaccurate, could you deal with the consequences?

For instance, suppose you test product page variations. At the end of the test, page variation #1 produced more conversions. But after pushing all of your traffic to that variant, you learn that the test was inaccurate and conversions ultimately fell, which represents lost revenue.

In a case like this, the reduced conversions represent the cost of learning. Can you tolerate that cost? Or would it cause irreparable harm to your business?

Some organizations have very little tolerance. An aircraft manufacturer conducting stress tests needs 99.99% confidence that its results are correct. If a bolt breaks while the plane is in the air, lives are at stake, so the manufacturer has a very low tolerance for error.

In ecommerce, we have a higher tolerance for error. Some companies may find that 75% confidence is sufficient, while others are comfortable moving forward with even less.

When you optimize conversions for a small site with low traffic, you’re accepting lower confidence in your test results. But if your tolerance is high, confidence matters less.

CRO for small businesses is unique because you have a high tolerance for the consequences of a bad experiment. If you’re only getting three conversions per day, a 30% or 50% drop doesn’t really mean much. Your job, therefore, is to determine what your tolerance is, and based on that, decide what kind of validation methods meet your needs.
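
One rough way to put a number on tolerance is an expected-loss estimate: the chance you are wrong multiplied by what being wrong costs. The model below is a deliberately simple assumption for illustration, not a standard formula from The Good, and it treats "confidence" loosely as the probability that the winning variant is really better.

```python
def expected_daily_loss(daily_conversions: float, drop_if_wrong: float,
                        confidence: float) -> float:
    """Hypothetical expected-loss model: P(wrong) x conversions lost per day if wrong.

    Treats `confidence` loosely as the probability the chosen variant is truly better.
    """
    p_wrong = 1 - confidence
    return p_wrong * daily_conversions * drop_if_wrong


# Same uncertainty (75% confidence) and same downside (a 50% drop), two store sizes.
for daily in (3, 3_000):
    loss = expected_daily_loss(daily, drop_if_wrong=0.50, confidence=0.75)
    print(f"{daily:>5} conversions/day -> about {loss:g} conversions/day at risk")
```

A store with three conversions a day risks under half a conversion per day, while a store with 3,000 risks hundreds. The same uncertainty carries very different stakes, which is why a small business can often act on lower confidence.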

Rapid Testing: Validation Beyond A/B Testing

The confidence vs. tolerance balance raises the question: How do we test different tactics on a site without much traffic if we can’t use A/B testing?

In the absence of data to validate statistical significance, we have to lean into rapid testing. When it comes to CRO for small businesses, this is the bread and butter.

Rapid testing is the practice of getting quick answers from people who aren’t site users but still match your customer profile. The goal is to get feedback quickly on isolated elements. This type of testing is fast, efficient, and doesn’t require a large user base.

That said, there are two downsides to rapid testing. First, testing quickly means you give up some statistical certainty. Second, you aren’t testing your actual customers, so results are less reliable (but not unreliable).

Nevertheless, when we talk about CRO for small businesses, rapid testing is your best option. Let’s look at the different types of rapid testing that help you optimize a website with low traffic.

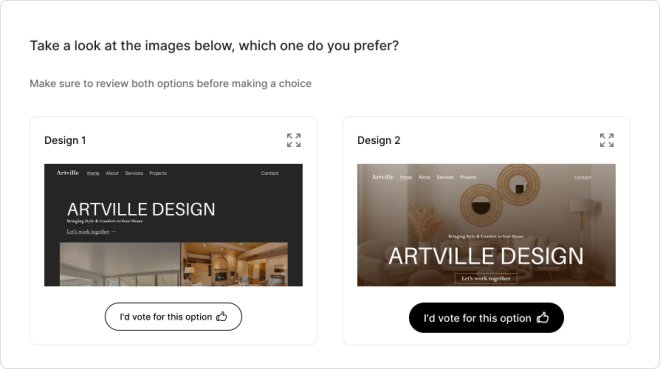

1. Preference testing

Preference testing is when you present two or more variants to users who match your target audience and ask for feedback on which variant is more likely to make a user perform a specific action or feel a certain way.

For example, you might show three ad designs to a number of people who match your target audience and ask, “Which of these is more likely to make you click the blue button and why?”

One of the best use cases for preference testing is display ads. Suppose a company has eight versions of an ad and wants to narrow them down to two or three to test against each other in the market. Preference testing quickly cuts out the worst performers and provides a useful gut check with an audience that matches some of the criteria of the test market audience.

Another good application for preference testing is any marketing asset with small amounts of copy. Whenever you ask people to evaluate multiple options, it’s important to keep the options simple so they can take it all in. This is especially true when you’re testing language.

For example, you might present test participants with three headlines and ask, “Which one makes you more interested in the product?” Here, the amount of information that they have to retain in order to make a decision is small. If you want to test a lot of copy, break it into smaller chunks and administer multiple tests.
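
Once a preference test closes, tallying the votes and checking the leader against pure chance gives you a quick read on whether the preference is real. This sketch uses an exact binomial tail in plain Python; the vote counts are made up, and because the winner is chosen after looking at the data, treat the p-value as a rough gut check rather than a formal test.

```python
import math
from collections import Counter


def binomial_tail(successes: int, trials: int, p: float) -> float:
    """Exact P(X >= successes) for X ~ Binomial(trials, p)."""
    return sum(
        math.comb(trials, k) * p**k * (1 - p) ** (trials - k)
        for k in range(successes, trials + 1)
    )


def summarize_preference(votes: list[str], options: list[str]) -> tuple[str, int, float]:
    """Return the most-picked option, its vote count, and how surprising that count
    would be if participants were choosing at random."""
    counts = Counter(votes)
    winner, top_votes = counts.most_common(1)[0]
    chance = 1 / len(options)  # null hypothesis: every option is equally likely
    return winner, top_votes, binomial_tail(top_votes, len(votes), chance)


# Hypothetical results: 50 participants choosing among three headlines.
votes = ["Headline A"] * 24 + ["Headline B"] * 14 + ["Headline C"] * 12
winner, top_votes, p_value = summarize_preference(
    votes, ["Headline A", "Headline B", "Headline C"]
)
print(f"{winner}: {top_votes}/{len(votes)} votes, chance p = {p_value:.3f}")
```

A small p-value says the leader is unlikely to be a coin-flip result, which is usually all you need to cut the weaker options.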

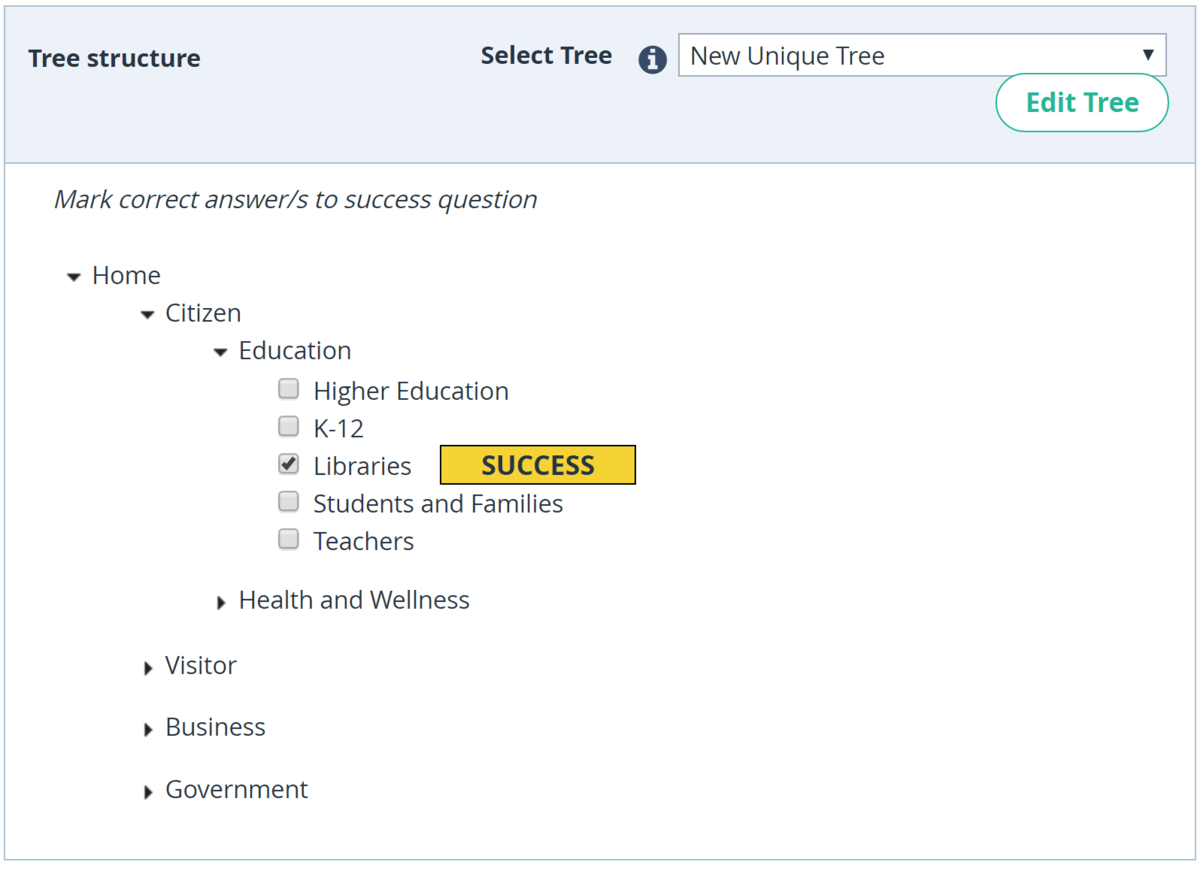

2. Tree testing

Tree testing is a usability testing method that helps evaluate the effectiveness of a website’s information architecture. It involves presenting users with a simplified, text-only version of the site or app’s hierarchical structure, often in the form of an accordion-shaped tree diagram, and asking them to complete specific tasks by navigating through the tree. It’s used to optimize site navigation, menus, and categories.

The goal of tree testing is to assess whether users can easily find the information they are looking for and to identify issues or potential improvements in how content is organized. It lets you measure things like how long it takes someone to find an item and where in the hierarchy they expect to find it.
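
Most tree testing tools report these metrics for you, but the arithmetic is simple. This sketch assumes a hypothetical record per participant per task (whether they succeeded, whether they went straight there without backtracking, and how long it took); the field names and sample rows are invented.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TreeTestResult:  # hypothetical record format
    task: str
    success: bool   # ended on the correct node
    direct: bool    # reached the end without backtracking
    seconds: float  # time to finish the task


def summarize_tasks(results: list[TreeTestResult]) -> dict[str, dict[str, float]]:
    """Success rate, directness, and median time for each task."""
    by_task: dict[str, list[TreeTestResult]] = {}
    for r in results:
        by_task.setdefault(r.task, []).append(r)
    summary = {}
    for task, rows in by_task.items():
        n = len(rows)
        summary[task] = {
            "success_rate": sum(r.success for r in rows) / n,
            "directness": sum(r.direct for r in rows) / n,
            "median_seconds": median(r.seconds for r in rows),
        }
    return summary


results = [
    TreeTestResult("Find returns policy", True, True, 12.0),
    TreeTestResult("Find returns policy", True, False, 30.0),
    TreeTestResult("Find returns policy", False, False, 45.0),
    TreeTestResult("Find returns policy", True, True, 9.0),
    TreeTestResult("Find gift cards", True, True, 8.0),
    TreeTestResult("Find gift cards", False, False, 40.0),
]
summary = summarize_tasks(results)
for task, stats in summary.items():
    print(task, stats)
```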

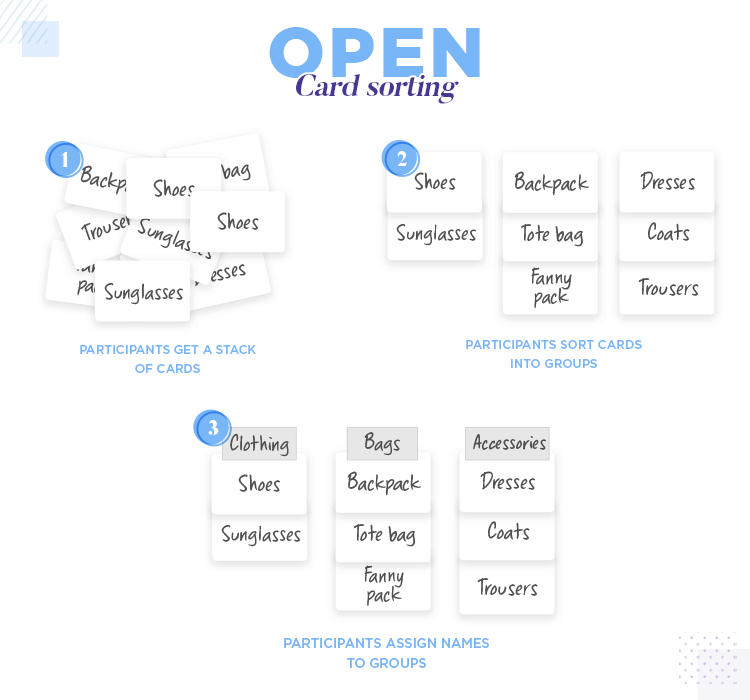

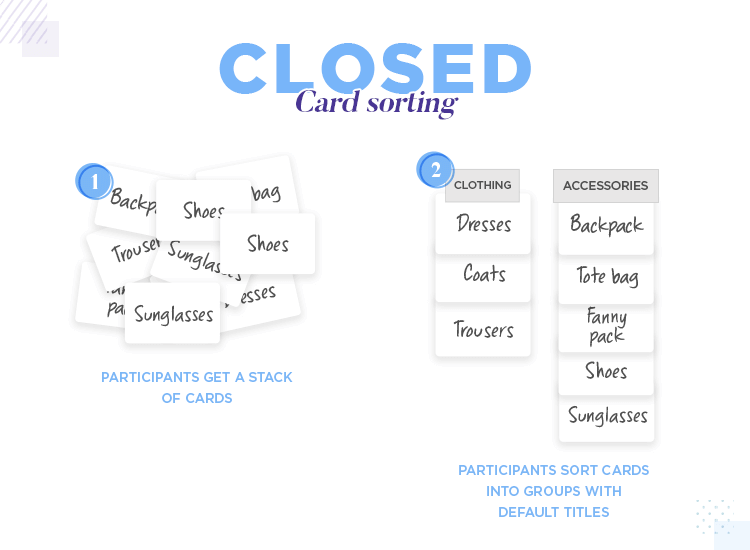

3. Card sorting

Card sorting is a generative type of research useful for earlier-stage work like product categorization and menu organization, which makes it especially applicable to CRO for small businesses.

In card sorting, participants are given a set of cards, each representing a piece of content or functionality, and asked to organize them into categories or groups based on their understanding or perception of how they relate to each other.

There are two main types of card sorting. In open card sorting, participants create their own categories or groups and name them based on their interpretations of the content or features.

In closed card sorting, participants are provided with predefined categories and asked to place the cards into the appropriate groups.

The results of card sorting exercises help designers and developers understand how users perceive the relationships between content or features, informing the design of navigation menus, categories, and overall information architecture to improve usability and user experience.
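
A common way to analyze card sorts is a co-occurrence (similarity) matrix: for every pair of cards, the share of participants who put them in the same group. Pairs with high scores are strong candidates to sit together in a menu or category. The card names and sorts below are hypothetical, and dedicated card sorting tools typically compute this for you.

```python
from itertools import combinations


def co_occurrence(sorts: list[list[set[str]]]) -> dict[tuple[str, str], float]:
    """Share of participants who grouped each pair of cards together.

    Each participant's sort is a list of groups; each group is a set of card names.
    Group labels are ignored, so this works for open and closed sorts alike.
    Pairs that were never grouped together are simply absent (a score of 0).
    """
    counts: dict[tuple[str, str], int] = {}
    for groups in sorts:
        for group in groups:
            for a, b in combinations(sorted(group), 2):
                counts[(a, b)] = counts.get((a, b), 0) + 1
    return {pair: n / len(sorts) for pair, n in counts.items()}


# Hypothetical open sorts from three participants.
sorts = [
    [{"Mugs", "Plates"}, {"Candles", "Throws"}],
    [{"Mugs", "Plates", "Candles"}, {"Throws"}],
    [{"Mugs", "Plates"}, {"Candles"}, {"Throws"}],
]
similarity = co_occurrence(sorts)
for (a, b), share in sorted(similarity.items(), key=lambda item: -item[1]):
    print(f"{a} + {b}: {share:.0%}")
```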

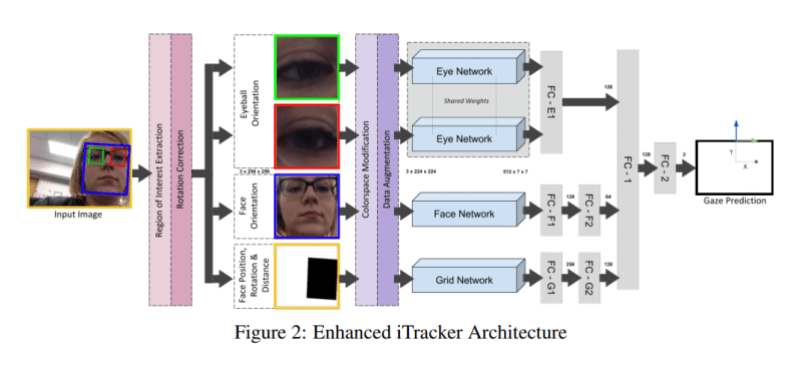

4. AI-based eye-tracking studies

Traditional eye-tracking studies involve tracking the gaze and movement of a user’s eyes as they interact with a website to understand how users consume information and identify areas that draw attention or cause confusion.

AI-powered eye-tracking studies remove the need for real participants by using machine learning models trained on large datasets of human eye movements. These models simulate human eye-tracking behavior and predict the likely gaze patterns and attention distribution of users viewing a new website.

By leveraging AI, you can gather valuable insights into user behavior and attention more quickly and cost-effectively. No traffic is necessary, which makes it especially powerful for CRO for small business. While AI eye tracking is not a perfect representation of how real people use your site, it’s a great way to evaluate which option from a group is more likely to get attention.

5. Recall testing

Recall testing is where you show users a design for a short period of time (usually five or 10 seconds) and then ask what they remember seeing on the page. The design participants recall more of is likely the more memorable and eye-catching one.

For example, to test an add-on in the cart, you can show one version of the cart page to 50 users and another version to a separate group of 50. If more people in one group recall seeing an enticing add-on, that is a good indicator that its placement in their version is more eye-catching.
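
With only 50 people per group, an exact test is a safer way to compare recall rates than a normal approximation. This sketch implements a one-sided Fisher's exact test with the standard library; the recall counts are hypothetical.

```python
import math


def fisher_one_sided(recalled_a: int, n_a: int, recalled_b: int, n_b: int) -> float:
    """One-sided Fisher's exact test.

    Returns the probability that design B gets at least `recalled_b` recalls
    if both designs were equally memorable (a hypergeometric tail).
    """
    total = n_a + n_b
    recalled = recalled_a + recalled_b
    ways = math.comb(total, recalled)
    tail = sum(
        # math.comb returns 0 when asked to choose more items than exist
        math.comb(n_b, k) * math.comb(n_a, recalled - k)
        for k in range(recalled_b, min(recalled, n_b) + 1)
    )
    return tail / ways


# Hypothetical: 18 of 50 recalled the add-on in design A, 29 of 50 in design B.
p_value = fisher_one_sided(18, 50, 29, 50)
print(f"p = {p_value:.3f}")
```

A small p-value suggests design B's recall advantage is unlikely to be a fluke of who happened to land in each group.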

6. Click testing

Click testing is similar to heat map testing, but instead of showing where users move their mouse, it shows where they click when you ask them to perform a specific task. This can be especially helpful when testing complicated interfaces like product configurators.

When we worked with Fully, we tested wireframes of their product configurators. We used click testing to instruct users to “Show us where you would click on the page if you wanted to update the color of the metal base.”

We assumed the participants would click the word “frame” on the right-hand panel that had an accordion-style menu. Instead, they clicked on the image, assuming the configurator was interactive since the picture was taking up 80% of the screen. This taught us we either needed to make the image clickable or make using the menu more obvious.
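
A first-click test boils down to mapping each click to a region of the page and counting. This sketch assumes a hypothetical layout loosely modeled on the configurator described above (an image covering most of the screen and an accordion menu on the right); the coordinates and clicks are invented, not data from the Fully study.

```python
from collections import Counter

# Hypothetical regions as (x_min, y_min, x_max, y_max) in 0-1 page coordinates.
# Checked in order, so the small "frame" menu item comes before the larger areas.
REGIONS = {
    "frame_menu_item": (0.80, 0.20, 1.00, 0.30),
    "product_image": (0.00, 0.00, 0.80, 1.00),
    "rest_of_menu": (0.80, 0.00, 1.00, 1.00),
}


def classify_click(x: float, y: float) -> str:
    """Return the first region that contains the click, or "outside"."""
    for name, (x0, y0, x1, y1) in REGIONS.items():
        if x0 <= x <= x1 and y0 <= y <= y1:
            return name
    return "outside"


def first_click_shares(clicks: list[tuple[float, float]]) -> dict[str, float]:
    """Share of participants whose first click landed in each region."""
    counts = Counter(classify_click(x, y) for x, y in clicks)
    return {region: n / len(clicks) for region, n in counts.items()}


clicks = [(0.40, 0.50), (0.30, 0.60), (0.90, 0.25), (0.50, 0.40), (0.85, 0.70)]
shares = first_click_shares(clicks)
print(shares)
```

In this made-up sample, most first clicks land on the image rather than the intended menu item, the kind of signal that points toward making the image clickable or the menu more obvious.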

7. Preference test with open-ended responses

This type of test is similar to a regular preference test, except that participants are asked open-ended questions after completing their prompts. They might be asked, “What did you like about this design and why?” or “Which one are you more likely to pick and why?”

In some cases, getting an open-ended response enables you to hear the why behind a user’s action, thus providing you with insight into how you’re making users feel along the journey.
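
Open-ended answers still need to be read by a person, but a simple keyword pass can help you spot recurring themes across dozens of responses. The codebook, keywords, and responses below are hypothetical; a real codebook would come from reading your own responses first.

```python
import re
from collections import Counter

# Hypothetical codebook mapping each theme to words that signal it.
CODEBOOK = {
    "price": {"price", "cost", "cheap", "expensive", "deal"},
    "trust": {"reviews", "guarantee", "secure", "trust"},
    "clarity": {"clear", "simple", "easy", "confusing"},
}


def tag_themes(response: str) -> set[str]:
    """Return every theme whose keywords appear in the response (sentiment is ignored)."""
    words = set(re.findall(r"[a-z']+", response.lower()))
    return {theme for theme, keywords in CODEBOOK.items() if words & keywords}


def theme_counts(responses: list[str]) -> Counter:
    """Count how many responses mention each theme at least once."""
    counts = Counter()
    for response in responses:
        counts.update(tag_themes(response))
    return counts


responses = [
    "The price feels like a good deal.",
    "Clear layout, easy to scan.",
    "I like the reviews and the guarantee.",
    "Too expensive and a bit confusing.",
]
print(theme_counts(responses).most_common())
```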

Other Optimization Tools for Low Traffic Sites

While rapid testing is the best way to validate your optimizations if you lack traffic, you’ll need some tools to generate testing ideas and gather qualitative data. The following knowledge-building tactics will help you understand foundational elements of your website, like traffic sources and demographics.

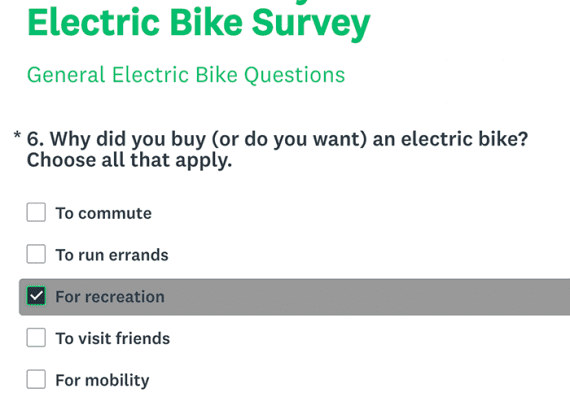

1. Surveys

Surveys are structured questionnaires designed to collect data from a specific population, providing valuable insights into attitudes, opinions, or experiences to inform decision-making or hypothesis testing.

People generally aren’t willing to complete long surveys, so ask the questions that are the most impactful to your business. In some cases, you may need to incentivize people to participate.
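
Small surveys deserve the same caution about confidence as small tests. This sketch computes a Wilson score interval, a standard interval for a proportion that behaves better than the simple plus-or-minus formula at small sample sizes; the 12-of-40 example is hypothetical.

```python
import math
from statistics import NormalDist


def wilson_interval(yes: int, n: int, confidence: float = 0.95) -> tuple[float, float]:
    """Wilson score interval for the share of respondents who answered `yes`."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = yes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


# Hypothetical: 12 of 40 respondents name shipping cost as their main hesitation.
low, high = wilson_interval(12, 40)
print(f"95% interval: {low:.0%} to {high:.0%}")
```

With 12 of 40 respondents, the interval runs from roughly 18% to 45%: wide enough to show direction, not precision.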

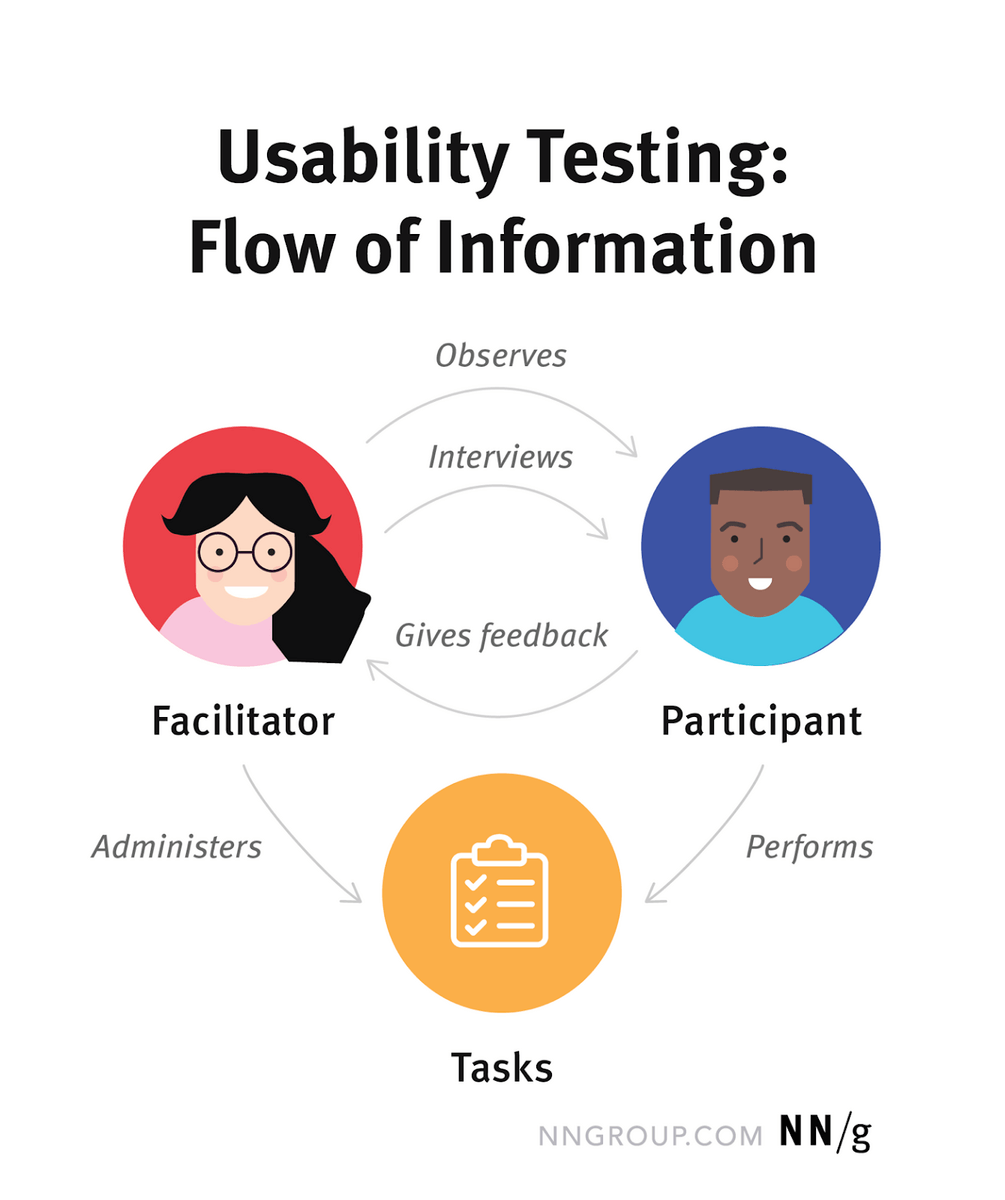

2. Usability testing

Usability testing is a research method that involves observing real internet users as they interact with a product or interface to evaluate its usability, functionality, and overall user experience, informing design improvements and enhancements.

A usability test requires a facilitator, participant, and a series of tasks for the participant to complete. The facilitator watches the participant complete the tasks or views a recording of the session later.

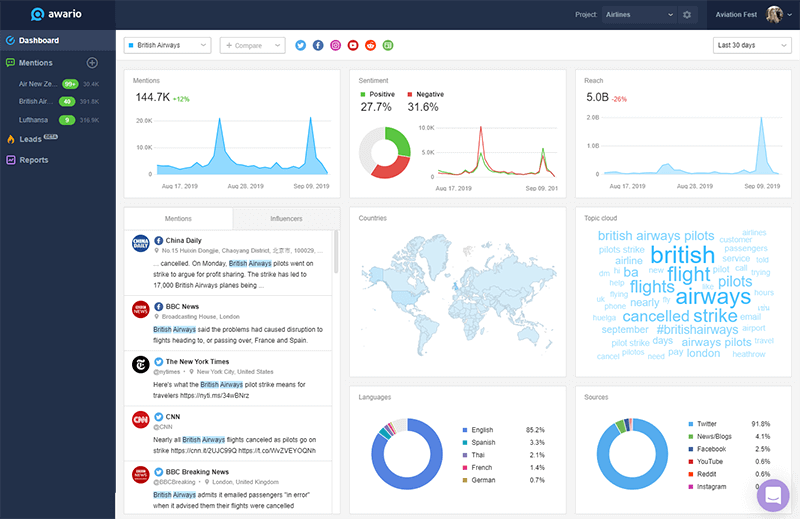

3. Reviews and social listening

Reviews and social listening involve monitoring and analyzing customer feedback and conversations on various platforms, such as review sites and social media, to gain insights into brand perception, customer satisfaction, and potential areas for improvement.

If you’re serious about social listening, it’s smart to use a dedicated app that aggregates all of the information on the web regarding your name, industry, demographic, and even relevant hashtags. Notice how this dashboard is tracking everything that has to do with British Airways.

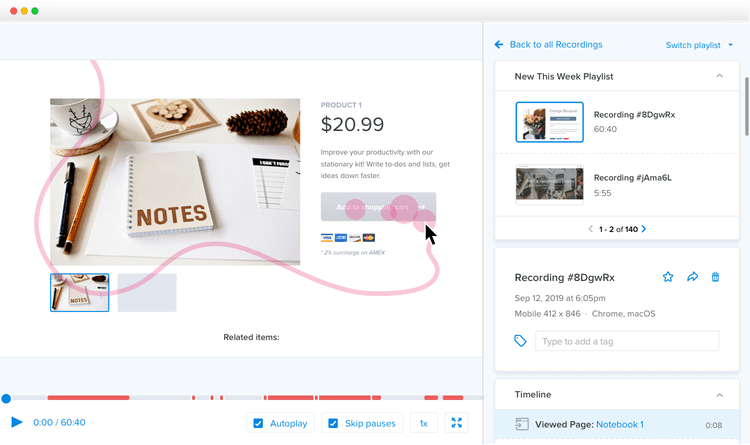

4. Session recording

Session recording is a digital analytics tool that captures and replays user interactions with a website or application. The primary goal of a session replay is to provide you with insights into user behavior and help to identify areas for improvement. With a tool like Hotjar, you can literally watch people use your site.

5. Heat map analysis

Heat maps are data visualizations that use color gradients to represent the density, frequency, or intensity of values in a dataset, making it easier to identify patterns, trends, or outliers. A heat map of your site shows which parts your visitors find most relevant and eye-catching, and which parts they don’t notice at all.
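
Under the hood, a click heat map is a density count. This sketch bins normalized click coordinates into a coarse grid and prints it with text shading in place of a color gradient; the grid size and clicks are hypothetical, and a real heat map tool renders the same idea with color.

```python
SHADES = " .:*#"  # light to dense, standing in for a color gradient


def click_density_grid(clicks: list[tuple[float, float]],
                       cols: int = 4, rows: int = 4) -> list[list[int]]:
    """Count clicks per grid cell; x and y are fractions (0-1) of page width and height."""
    grid = [[0] * cols for _ in range(rows)]
    for x, y in clicks:
        col = min(int(x * cols), cols - 1)  # clamp x == 1.0 into the last column
        row = min(int(y * rows), rows - 1)
        grid[row][col] += 1
    return grid


def render(grid: list[list[int]]) -> str:
    """Map each cell's count to a shade relative to the busiest cell."""
    peak = max(max(row) for row in grid) or 1  # avoid dividing by zero on an empty grid
    steps = len(SHADES) - 1
    return "\n".join(
        "".join(SHADES[round(count / peak * steps)] for count in row) for row in grid
    )


clicks = [(0.10, 0.10), (0.15, 0.12), (0.90, 0.90), (0.55, 0.30)]
grid = click_density_grid(clicks)
print(render(grid))
```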

CRO for Small Business Isn’t Impossible

While it’s true that conversion rate optimization is more reliable when you have a lot of traffic, that doesn’t mean it’s impossible if your site sees few visitors. You may have less confidence in your tests, but you also have a higher tolerance for being wrong.

That gives you a unique advantage when it comes to CRO for small business, as long as you’re willing to use some of the testing methodologies we explained above, instead of standard A/B testing in your conversion optimization process.

If you’re a small business, you’re the perfect size for a CRO Audit from UserInput by The Good. This audit taps into some of the tactics mentioned in this week’s article to provide a personalized action plan to help you design better shopping experiences and improve your most important metrics.

About the Author

Jon MacDonald

Jon MacDonald is founder and President of The Good, a digital experience optimization firm that has achieved results for some of the largest companies including Adobe, Nike, Xerox, Verizon, Intel and more. Jon regularly contributes to publications like Entrepreneur and Inc.