No Silver Bullets: Why a Structured Testing Process is the Best Way to Drive Sustained Growth

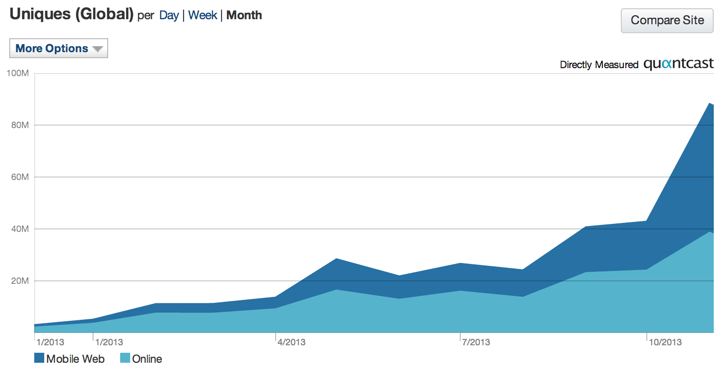

A structured testing process is key to long-term revenue gains. Like small retirement account deposits, consistent, steady improvements to your website compound and lead to strong, sustained growth.

We often treat A/B testing like miracle weight loss cures. We think that if we can discover the silver bullet, we’ll unlock crazy, stratospheric results. If we can find the right button color or opt-in field or images, we’ll tap into a goldmine of ecommerce revenue.

We search for massive overnight growth of viral proportions – the kind of growth that can instantly make us rich and famous, a legend amongst marketers.

In the back of our minds, we know that weight loss pills don’t work, but we don’t apply the same rigorous logic to A/B testing. We believe that if we can stumble upon the right “hack”, we can achieve meteoric growth overnight.

Sensational stories spread about how changing a single button on a website can generate $300 million in additional revenue for a website, and before long we’re all searching for the same kind of Holy Grail of hacks.

But the reality is, this kind of colossal growth is rare. Yes, occasionally companies unlock $300 million insights, but this is a highly unusual occurrence. The true ticket to long-term, sustainable growth is making incremental improvements to site usability for your target audience. This type of growth is slow, steady, and persistent.

In the end, searching for the perfect growth hack will take far more time and business resources than simply following a structured testing process that makes iterative, data-validated improvements. Often, ecommerce managers spend so much time guessing what might work that they end up making little or no progress.

Plus, they end up jumping too quickly from solution to solution without spending the time necessary to evaluate the results. Instead of digging through the data to find out why something did or didn’t work, they simply move to the next test, hoping that it will be the golden ticket.

The truth is, intuition and gut feelings are rarely correct. To truly achieve meaningful growth, you need a structured testing process. It’s not enough to make changes based on your gut, especially when the tools and resources are available today that can help you validate whether your gut is right or wrong.

Justin Rondeau writes about the problem of simply adding social proof to your site because your gut tells you it’s right:

Normally we see companies just add social proof without testing it because virtually every blog post and ‘social media expert’ has told them it will help conversions. Thankfully the team at this site tested this before implementation, or they would have missed out on a lot of opt-ins – more than double the opt-ins in fact.

Don’t get me wrong, there is a ton of value in social proof. For one, it is great at reducing a visitor’s anxiety and can give your brand a sense of authority. The trick is finding out where it is best to publish your social stats and what exactly is worth sharing. Should you post newsletter subscribers, Facebook likes, awards, or all of the above? Simply put: test it out. Never add something blindly; you don’t know how it will impact the bottom line.

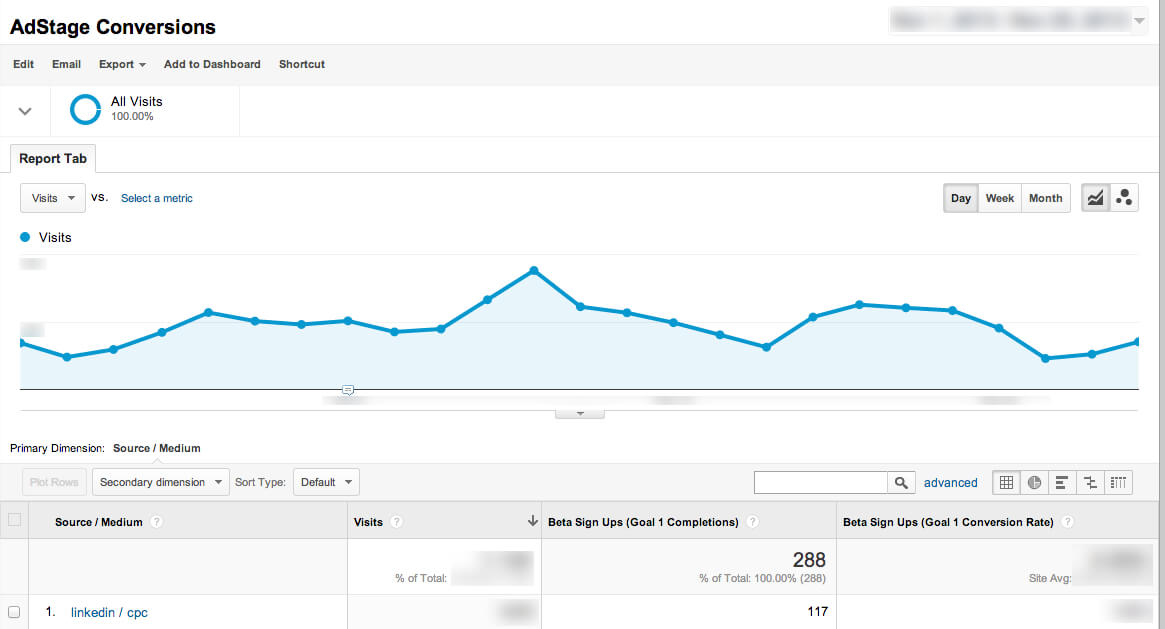

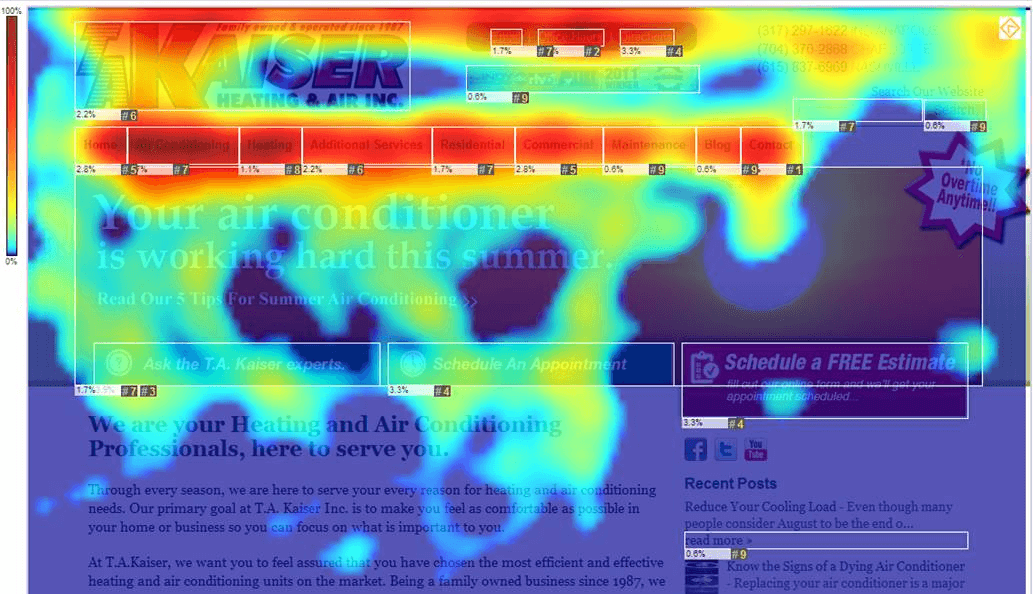

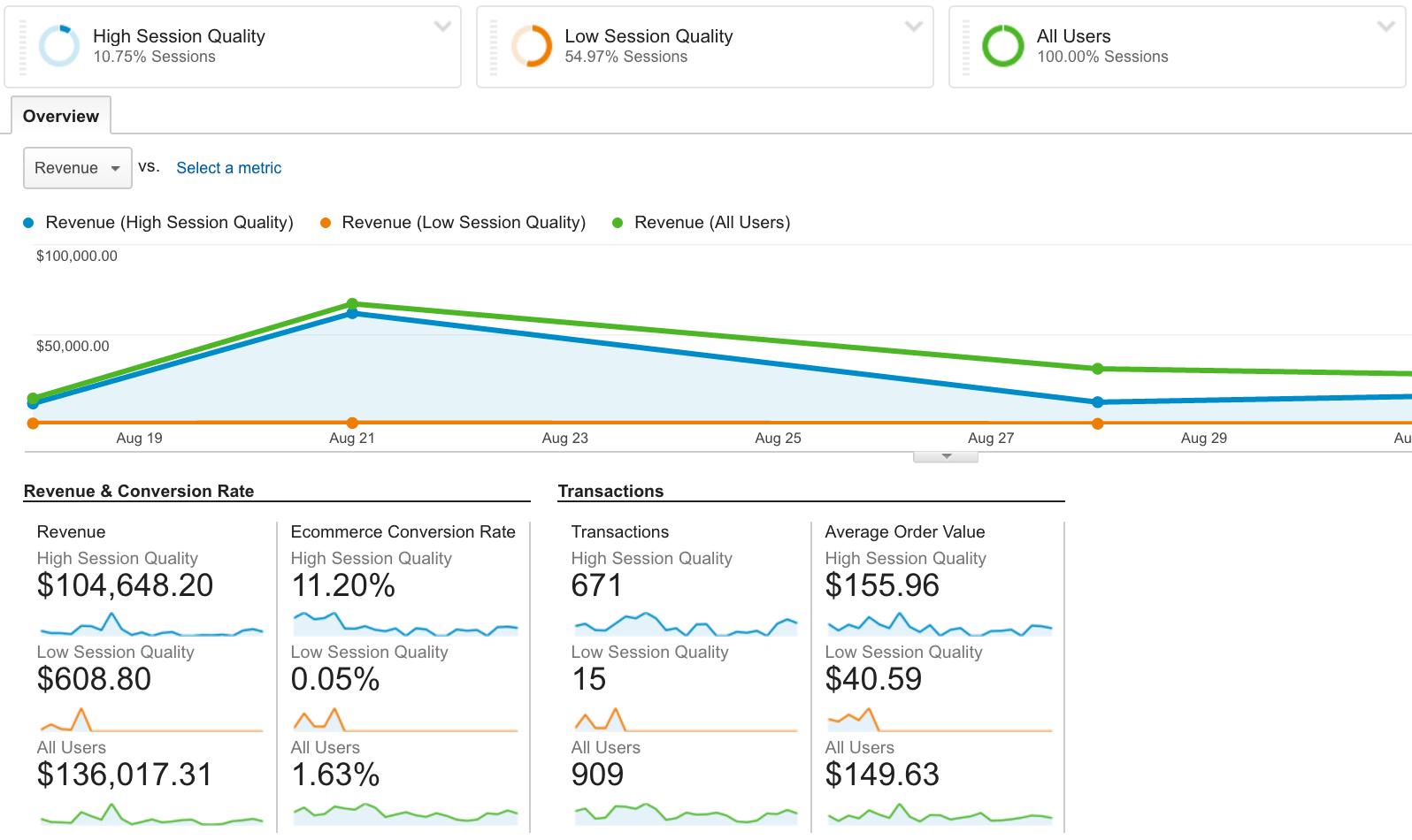

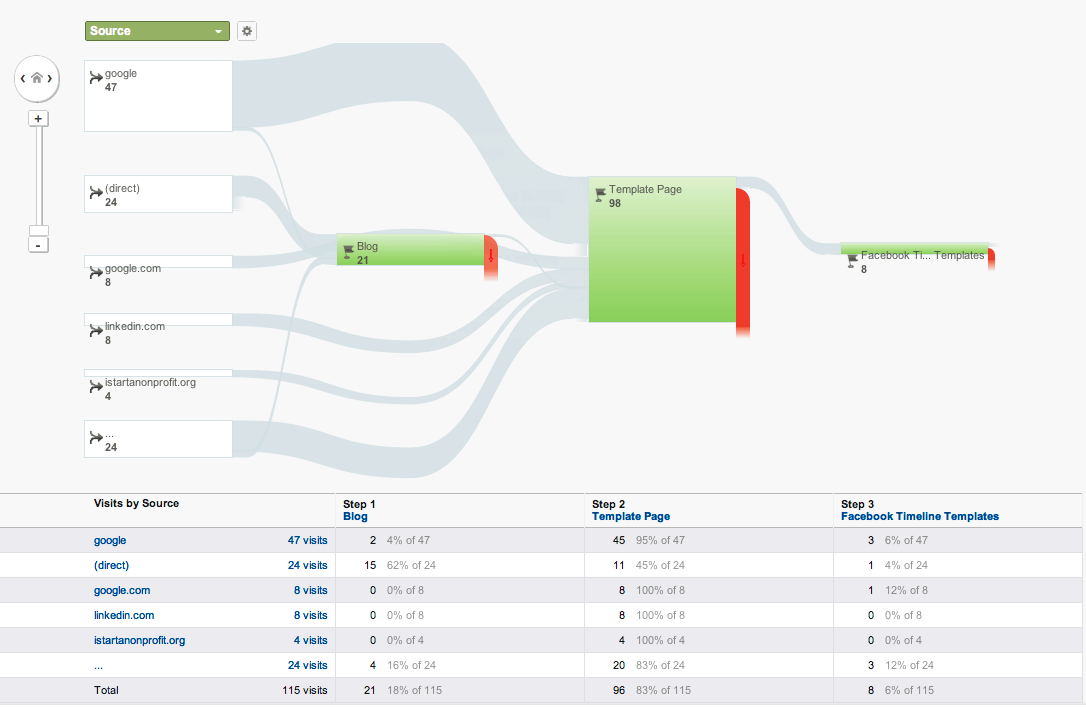

Through analytics, heatmaps, and user testing, you can learn directly from your consumers: not just what actions they are taking on your site, but why they are taking them. This gives you far more insight into your customers and how they prefer to use your site.

Why should the testing be structured? The primary reason is so you don’t waste your testing insights. A structured process allows you to adjust after unsuccessful tests as well as build upon successful ones. Take a look at our Xerox case study for example.

If you don’t plan and record your insights, you’re setting yourself up to repeat failed tests, test the wrong things, or worse. In addition, sometimes it will be necessary to build experiments upon insights learned in a previous test, and without a structured process, these sorts of tests can be more difficult.

A/B testing is much closer to a rigorous workout routine than a miracle weight loss pill. If you randomly jump from exercise to exercise, you’ll make very little progress. On the other hand, by performing the same small actions again and again, you make incremental gains that eventually add up to big results.
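To make the compounding idea concrete, here is a minimal sketch of the arithmetic. The 2% monthly lift is a hypothetical figure chosen for illustration, not a benchmark from this article, and `compounded_lift` is an illustrative helper name.

```python
def compounded_lift(monthly_lift: float, months: int) -> float:
    """Return the cumulative relative lift after compounding a fixed monthly lift."""
    return (1 + monthly_lift) ** months - 1


# Hypothetical assumption: winning tests add a 2% relative lift each month.
for months in (6, 12, 24):
    print(f"{months:>2} months: {compounded_lift(0.02, months):.1%} cumulative lift")
```

Under that assumption, a year of 2% monthly gains compounds to roughly 27%, a bit more than the 24% you would get by simply adding the gains together, and the gap widens the longer the process continues.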

Why Are We So Obsessed With Finding Silver Bullets?

Massive, revenue-boosting, silver bullet discoveries are every ecommerce marketer’s dream. If only ecommerce managers could find that one little tweak that would cause their revenue and conversions to blow through the roof. And it’s not like marketing websites are helping.

In a story about incredible gains from A/B testing, Hubspot tells the story of EA Sports and one particular game they were selling:

One variation removed the promotional offer from the page altogether. The test lead to some very surprising results: The variation with no offer messaging whatsoever drove 43.4% more purchases. Turns out people really just wanted to buy the game — no extra incentive necessary.

That one minuscule change resulted in millions of dollars in extra sales. You’re tempted to think that you can get those kinds of gains as well. This is like trying to get rich by winning the lottery. You may, by some astronomical stroke of luck, pick the winning numbers, but it’s far more likely that you’ll waste loads of money and never win anything.

Bottom line: random chance doesn’t usually lead to success.

Lars Lofgren puts it like this:

…randomly picking elements to test produces lackluster results. The needle doesn’t move at all.

Right now, there are several big-win tests you could run that would make a HUGE difference to the growth of your company. Like 5-20% in customer growth kind of huge. These optimizations are just sitting there, waiting for you to start running A/B tests.

Finding big-wins doesn’t happen by accident.

Here’s the thing: if you A/B test all sorts of random stuff, you’ll never find these big-wins.

To find them, we’ll need a completely different process for deciding which tests to run. There’s a time and a place for testing everything we can think of but the big-wins require a completely different approach to testing.

Trying to unlock growth hacks seems so appealing, but it’s an incredibly short-term mindset. It’s looking for the big strike so that you don’t have to put in the work of incremental, structured A/B testing.

Achieving true, big gains in A/B testing is a long game, involving multiple tests, multiple hypotheses, and numerous iterations. You have to dive deep into the analytics and look for places where you’re leaving revenue on the table. You need to do user testing to find out whether your gut feelings are actually true.

This is not a short, easy process, but it’s the right way to do A/B testing. It’s a way of guaranteeing success rather than gambling with random website changes.

Elena Dobre phrases it like this:

A/B testing should always be a part of a well-structured plan to improve conversion rate. Otherwise, doing A/B testing without having in mind the online marketing and conversion goals will lead to ineffective results. Usually, a conversion optimization beginner has to run eight tests to have a successful one.

Declare an A/B test successful only when it drives to a significant increase in conversion.

To cut corners, a structured approach to A/B testing needs to be a continuous process of improving the website’s performance. Consistency is the key to having a constant positive impact on the key performance indicators.

You need to have a marathon mentality rather than the mindset of a 100-meter sprinter.

Of course, all this raises the question: but why?

You know that structured A/B testing is more valuable than random testing, but you’re incredibly busy as a marketer.

Random A/B tests still can provide some benefit, right?

Why Is Structured Testing A Better Alternative?

It’s true that random testing can occasionally generate positive results, in the same way that jogging once per month is healthier than always sitting on the couch.

But random tests can also have significantly negative impacts, both on your customers and your overall revenue.

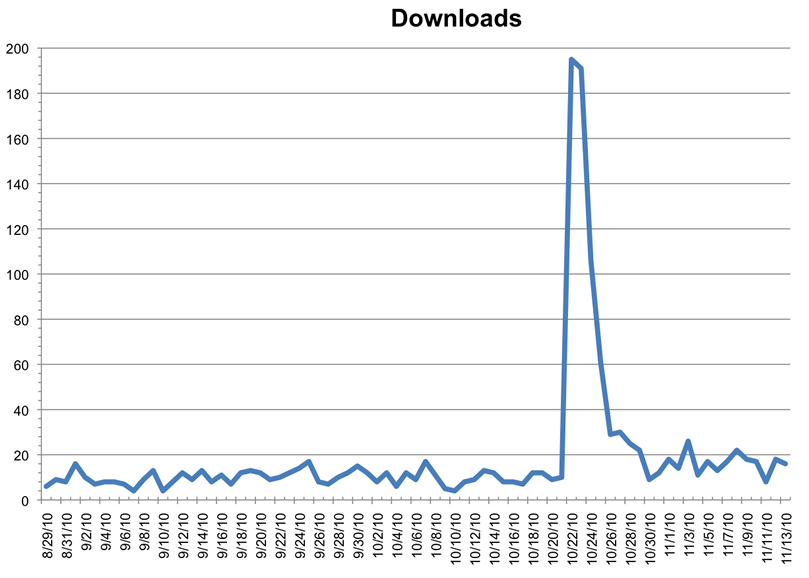

Brian Campbell of Lucidchart tells how a seemingly successful A/B test actually caused a huge problem for them:

Although we had increased the aggregate number of users creating documents, the percent of new registrations that created a document had dropped. More of these new users bounced before creating a document. However, by increasing the top of the funnel, the number of users engaging with the product increased in aggregate. If we had better communicated this impact we may have avoided the panic that came with having an important metric drop so suddenly. Going forward we make a point to track both the key results of the test, as well as other important metrics.

This is the danger of unstructured A/B testing. It can create significant unintended consequences. The change may be something your target audience dislikes, causing customers and revenue to drop off. Or the change may not have been closely enough tied to your overall objectives, resulting in unexpected side effects like the ones above.
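The pattern in the Lucidchart story, where an aggregate count rises while a rate falls, is easy to miss if you track only one number. Here is a minimal sketch of checking both together. All figures and names (`Variant`, `summarize`) are hypothetical and are not Lucidchart’s data.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    registrations: int
    document_creators: int

    @property
    def creation_rate(self) -> float:
        """Share of new registrations that went on to create a document."""
        return self.document_creators / self.registrations


def summarize(control: Variant, variation: Variant) -> dict:
    """Relative change in the primary metric and in a secondary (guardrail) metric."""
    return {
        "creators_change": variation.document_creators / control.document_creators - 1,
        "rate_change": variation.creation_rate / control.creation_rate - 1,
    }


# Hypothetical numbers: the variation widens the top of the funnel.
control = Variant("control", registrations=1000, document_creators=400)
variation = Variant("variation", registrations=1500, document_creators=480)

report = summarize(control, variation)
print(f"Document creators: {report['creators_change']:+.0%}")
print(f"Creation rate:     {report['rate_change']:+.0%}")
```

In this made-up case the number of users creating documents goes up while the percentage of registrations creating one goes down. Reporting both figures side by side is one way to avoid the surprise the quote describes.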

Structured testing helps you avoid unforeseen mistakes.

Structured testing also helps you find changes that your audience didn’t even know they wanted.

Steve Jobs famously said, “A lot of times, people don’t know what they want until you show it to them.” In other words, customers are often unable to articulate what they really want. Structured A/B testing allows you to uncover and tap into those desires, which can produce a serious jump in your overall revenue.

For example, if you have two similar pages and one is drastically outperforming the other, there’s a reason, and revenue in that reason. Your customers probably won’t be able to articulate why they prefer one page. Maybe it’s the layout or maybe the copy better resonates with their pain points. Maybe it really is something as simple as the text on a single button.

Structured testing allows you to uncover that reason. It allows you to methodically test one hypothesis at a time until you discover the reason(s) one page is outperforming another. It allows you to unlock desires and preferences that your customers didn’t even know they had.

Finally, systematic A/B testing cuts through competing stakeholder opinions. Everyone in your company has an opinion. Designers want their pages to look a certain way, copywriters like some sentences and not others, and backend personnel want to keep your site running smoothly.

Introducing changes often rubs people the wrong way, especially if they’ve invested hours in crafting an image or paragraph or process.

Here’s where A/B testing comes to the rescue. You can argue aesthetics and performance all you want, but you can’t argue with hard data. Revenue is always the bottom line. A page may not look as pretty, but if it generates more revenue it wins the day. A certain phrase may not sound as smooth, but if it bumps up conversions it wins.

Data always cuts through politicking. It allows you to stay focused on your end goal rather than getting mired in endless unnecessary debates.

What Does Structure Add To Testing?

Trying to build anything meaningful without a structure and plan produces slapdash results. If you try to erect a building without a blueprint, the foundation will be crooked, you’ll do things out of order, and the roof will leak.

The same principle applies to A/B testing. Structure allows you to do the right things in the right order at the right time. It gives you a step-by-step plan for achieving the most gains.

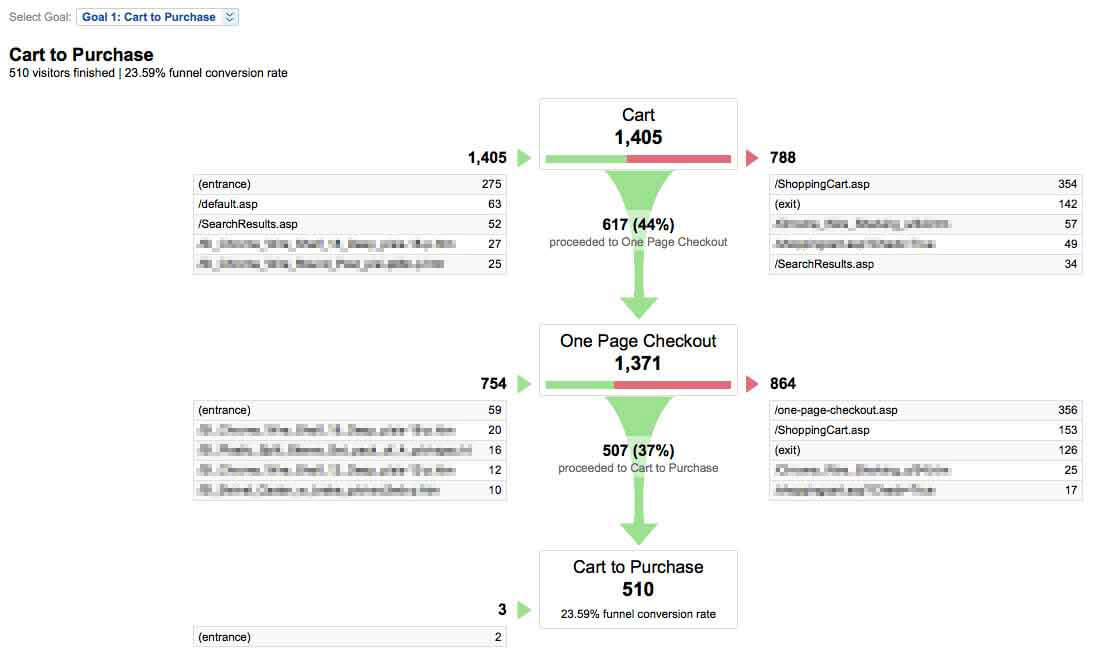

The reality is most ecommerce companies have several primary, large roadblocks preventing them from success. These roadblocks need to be cleared before the minor ones are addressed. Without structured testing, it’s easy to be distracted by minor issues and not address the biggest problems.

When you have thoroughly analyzed your data, studied heat maps, explored user testing, and conducted surveys, you have a good feel for what problems matter most to your company. Then you can systematically and iteratively create and test hypotheses to eliminate the big problems first.

You should only move to smaller issues after you’ve eliminated the big ones.

Elena Dobre suggests a structure similar to this:

Once you have analyzed what is happening on your website and defined your target metrics and found out which pages are under performing, it’s time to select the hypotheses based on the following three criteria:

Potential – rank the most important pages – homepage, product page, category page 1, cart page, etc or landing page 1, landing page 2, etc, based on how poorly they perform in terms of conversion rates. Start with the pages with the smallest Conversion Rate values until you reach the end of the most important webpages that you will need to optimize.

Importance – take every page that you have included in the list above and complete it with traffic volume data. For example, if the most underperforming page is “category page 1”, you need to find out if it has a high volume traffic which costs you enough money to consider optimizing it. On the other hand, if this page is receiving a small traffic volume that doesn’t generate sales (remember the 20-80 rule of the most valuable traffic segments for your business), then eliminate it from the list.

Ease – the third condition in prioritizing hypotheses is the ease of implementing the test. If it takes too much time, money due to technical problems or other external factors that you cannot control, then give up the A/B testing hypothesis.
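The three criteria above can be sketched as a simple filter-and-sort. This is only one possible reading of the quoted approach: the page data, the `min_visits` threshold, and the yes/no `easy_to_test` flag are all hypothetical simplifications.

```python
from dataclasses import dataclass


@dataclass
class PageCandidate:
    name: str
    conversion_rate: float  # Potential: lower rate means more room to improve
    monthly_visits: int     # Importance: traffic volume reaching the page
    easy_to_test: bool      # Ease: can the test be built without major obstacles?


def prioritize(pages: list[PageCandidate], min_visits: int) -> list[PageCandidate]:
    """Drop low-traffic or hard-to-test pages, then rank worst converters first."""
    viable = [p for p in pages if p.monthly_visits >= min_visits and p.easy_to_test]
    return sorted(viable, key=lambda p: p.conversion_rate)


# Hypothetical example data, for illustration only.
pages = [
    PageCandidate("homepage", 0.030, 50_000, True),
    PageCandidate("category page 1", 0.008, 1_200, True),
    PageCandidate("product page", 0.015, 30_000, True),
    PageCandidate("cart page", 0.012, 9_000, False),
]

for page in prioritize(pages, min_visits=5_000):
    print(f"{page.name}: {page.conversion_rate:.1%} conversion, {page.monthly_visits:,} visits")
```

With these made-up numbers, "category page 1" is dropped for low traffic and the cart page for difficulty, leaving the product page as the first candidate and the homepage second.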

Structured testing also allows you to avoid redundancy. If you don’t have a set process for testing, evaluating the insights, and then retesting, you’ll have significant overlap in your tests. You’ll fail to address all the problems identified in each test, miss out on key insights, and end up testing the same elements again and again.

When you identify a problem, it’s tempting to simply slap a fix on it and think you’ve totally solved it. This solution often only fixes the problem on the surface, neglecting deeper issues and missing out on big gains available. A structured process enables you to systematically work through each problem, thoroughly addressing it on every level.

Finally, systematic testing keeps you on track with time management. Some tests depend on each other and can’t be run simultaneously. Tests need to run for some time before reaching statistical significance, and the temptation is to either move too quickly through them or to start running tests simultaneously without waiting for the full results.

Speaking of the need for statistical significance, Tom Ewer writes:

You might think to yourself, Hey, this has been running for a while now, and I think I’ve received enough visitors to call it – let’s go with choice number one! – and why not? After all, the numbers back you up.

The issue here, however, would be that you failed to consider the ‘chance factor’.

What if you ran the same test at another time and the results were significantly different? Or if you decided to keep the test running for a longer period, and the numbers began to swing in the opposite direction with a considerably larger sample pool? Chance plays a role in every A/B test…

A thorough A/B testing process keeps you on track with time management and helps ensure your tests are all statistically valid. It forces you to follow a proven process for testing interdependent elements and keeps you from moving forward too quickly.
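One common way to account for the "chance factor" is a two-proportion z-test, sketched below using only the Python standard library. This is an illustrative choice, not a method the article or the quoted authors prescribe. It assumes the sample size was fixed before the test started, and the visitor and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical data: the same 4% vs. 5% conversion rates at two sample sizes.
z_small, p_small = two_proportion_z_test(20, 500, 25, 500)
z_large, p_large = two_proportion_z_test(200, 5000, 250, 5000)

print(f"500 visitors per variant:  z = {z_small:.2f}, p = {p_small:.3f}")
print(f"5000 visitors per variant: z = {z_large:.2f}, p = {p_large:.3f}")
```

The observed rates are identical in both cases, yet only the larger sample clears the conventional 0.05 threshold. That is why calling a test early, just because one variant appears to be ahead, can mislead you.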

Conclusion

There are no silver bullets or magic cures when it comes to sustained growth. Growth emerges from a systematic, sustained process. Viral hits and $300 million insights are very rare.

But here’s the good news: growth is achievable. It’s not complicated or particularly complex. There are three simple requirements:

Pursue objective improvements. You’re not looking for the random home run or lottery strike. You’re pursuing objective, data-based, process-driven improvements. This isn’t about gut feelings or intuition. There are clear improvements that can be made.

Pursue a methodical process. Instead of manically chasing twenty rabbits, you’re doggedly pursuing single objectives. This requires persistence and a commitment to the long game.

Pursue sustainable growth. The temptation with A/B testing is to shoot for the moon – to try to find that one growth hack that is going to revolutionize everything. But you won’t fall for that. You’re after sustainable growth that will eventually lead to big gains.

Thomas Edison said, “The reason a lot of people do not recognize opportunity is because it usually goes around wearing overalls looking like hard work.”

There are many opportunities for growth hidden in your website, waiting to be unlocked. But you have to be willing to put on the overalls and do the hard work.

—

Read our case studies to learn how The Good has used a structured testing process to drive sustainable ecommerce growth for leading brands.

Explore our services to learn how The Good can help drive sustainable compound ecommerce growth for your brand.