How to Approach A/B Test Design for the Clearest Results

What's the optimal approach to running an A/B testing program on your website? This Insight looks at how you should approach experimentation so you can collect the clearest and most impactful results.

Relying on intuition to make major changes to a website is a guessing game that can lead to lower conversion rates and a poor user experience.

Rather than basing decisions about your website’s UX design on guesses or assumptions, you’re much better off running an A/B test.

Here’s the thing: when first beginning a testing program, there’s often a desire to run large, site-wide A/B tests to maximize the efficiency and ROI of experimentation. But designing tests that modify multiple elements (variables) can lead to convoluted results that don’t show which change actually made the difference, or why.

In this Insight, we’ll be looking at how to effectively approach A/B and multivariate test design to deliver the most impactful results.

Here’s what we’ll be covering:

- The common misconception about A/B and multivariate testing

- Recommendations for getting the best testing results

The Biggest Misconception About A/B Testing

Here’s the problem: when brands first start A/B testing, it’s natural to expect that a handful of website changes will drastically improve conversion rates, but that’s rarely how it works.

Experimentation is an iterative process. It typically begins with a period of high-value exploratory testing that deepens our understanding of how users interact with the site and which site elements matter most during the purchase journey.

These tests are often targeted at the parts of the site that get the most engagement, so they’re still impactful changes, even if they’re smaller. From there, we develop more specific tests for the website based on data aggregated from user research and insights gained from earlier experiments.

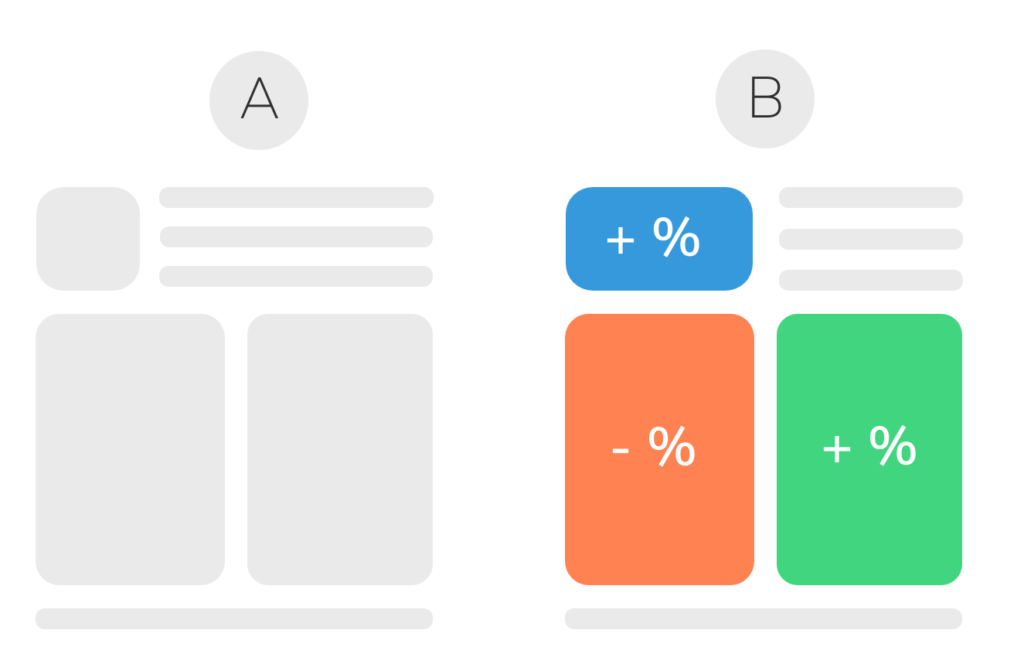

The problem with testing multiple variables in a single A/B test is that it’s more difficult to isolate the impact that each variable had on the collective results of the test.

Let’s say you decide to run an A/B test on your homepage. You leave variation A as your control, but variation B includes three separate variables, each changing a different element of your homepage (the hero image, menu order, and button color). If variation B wins, there’s no clear way to tell which variable actually caused it to win, or whether one change helped while another hurt.

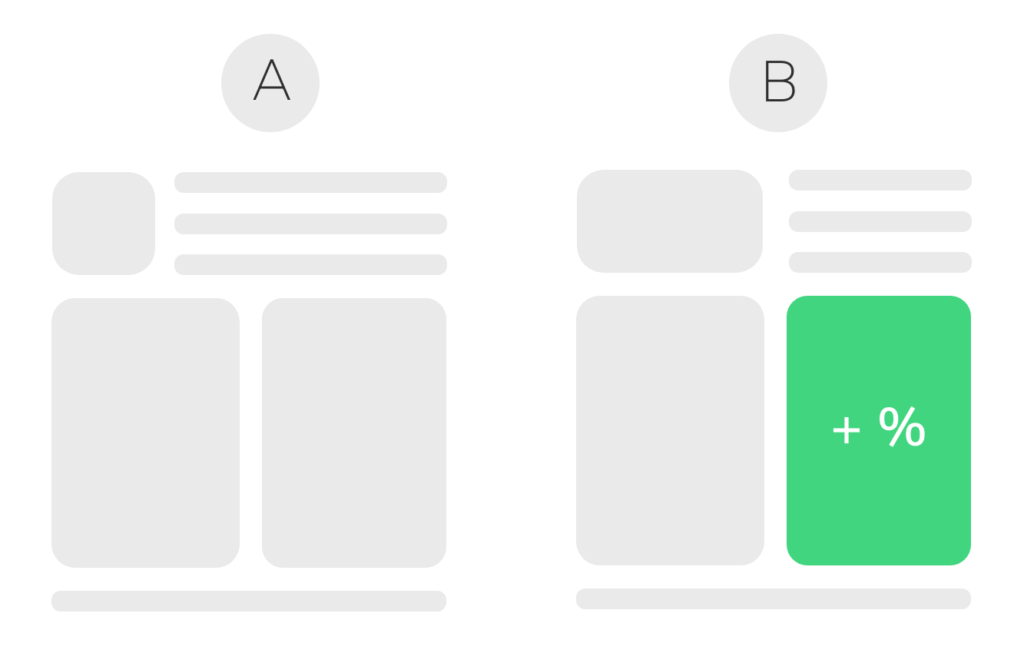

If you instead isolate the three variables and run a separate A/B test for each, you also isolate the impact that each variable has on your homepage. With this approach, you can be far more confident that each change you eventually apply to your live site has a positive impact.

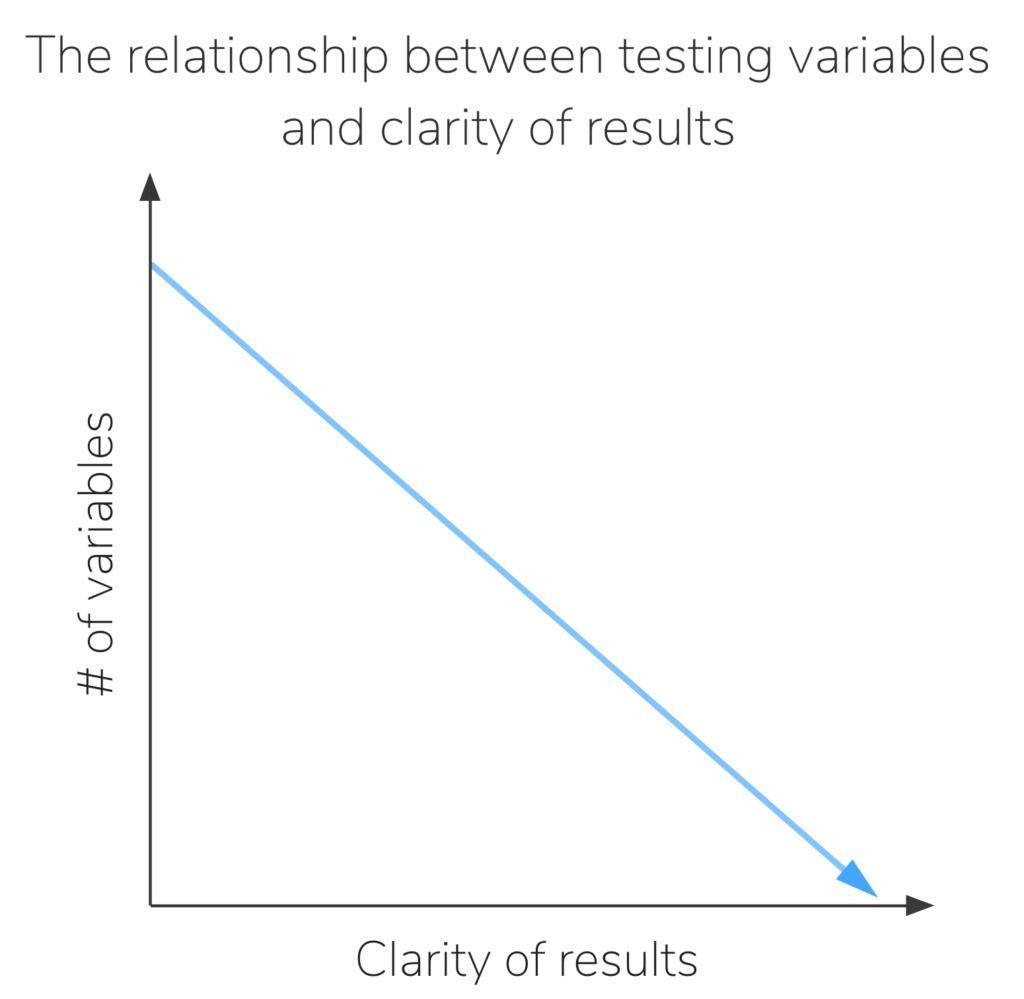

As the number of variables in a test decreases, the clarity of your results increases. The model below illustrates how introducing fewer variables into an A/B test allows for a clearer interpretation of the results.

Understanding individual user interactions in detail gives you a clearer path when you design more expansive site-wide tests later on. The initial round of exploratory A/B testing lays the foundation for what to focus on throughout the engagement. If too many variables are introduced, it’s difficult to tell how the changes in the test affected user behavior.

Note: This isn’t to say you can’t get meaningful results from your first round of tests, but you’ll more likely need to wait until you understand user behavior on your site better before you can design a winning test.

Another important distinction to acknowledge is that it’s entirely possible to manipulate many variables in an A/B test and still get a clear understanding of their impact on user behavior. Custom goals in Google Analytics or events in Google Tag Manager can be set up and monitored to examine micro-interactions and conversions, but this can be a time-intensive effort that should be done with a web developer.
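As a rough illustration of that kind of micro-interaction analysis, the sketch below assumes you have exported event records (session ID, variant, event name) from your analytics tool. The event names, records, and session counts are all hypothetical, and this is plain Python over exported data, not the Google Analytics or Google Tag Manager API. It computes, per variant, the share of sessions that fired each event, which can hint at which changed element users engaged with.

```python
from collections import Counter

# Hypothetical exported events: (session_id, variant, event_name).
# Event names are illustrative, not Google Analytics defaults.
EVENTS = [
    ("s1", "A", "hero_click"),
    ("s1", "A", "hero_click"),  # repeat clicks in one session count once
    ("s2", "A", "add_to_cart"),
    ("s3", "B", "hero_click"),
    ("s3", "B", "add_to_cart"),
    ("s4", "B", "menu_click"),
    ("s5", "B", "cta_click"),
]

# Hypothetical total sessions per variant (taken from your analytics tool).
SESSIONS = {"A": 2, "B": 3}


def interaction_rates(events, sessions_per_variant):
    """Share of each variant's sessions that fired each event at least once."""
    # Deduplicate so a session counts at most once per event name.
    unique = {(session_id, variant, name) for session_id, variant, name in events}
    counts = Counter((variant, name) for _, variant, name in unique)
    return {
        (variant, name): count / sessions_per_variant[variant]
        for (variant, name), count in counts.items()
    }


if __name__ == "__main__":
    for (variant, name), rate in sorted(interaction_rates(EVENTS, SESSIONS).items()):
        print(f"{variant} {name}: {rate:.0%}")
```

Per-element engagement like this is directional: a lift in clicks on one element suggests where to look, but it does not prove that element caused a change in conversions.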

Recommendations for getting the best testing results

If you’re managing an ecommerce website with fewer than 10,000 unique sessions per month, it’s very difficult to produce statistically reliable results from testing multiple variables at the same time. Kicking off a testing program with smaller, exploratory A/B tests will help your team better understand the behaviors of your target audience and will help inform future tests.
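To see why traffic matters so much, the sketch below estimates how many sessions each variant needs, using the standard normal-approximation sample-size formula for comparing two conversion rates (two-sided test). The 5% significance level, 80% power, 2% baseline conversion rate, and 20% relative lift are illustrative assumptions, not figures from our testing, and the sketch treats every session as an independent visitor. Under those assumptions a simple two-variant test needs roughly 21,000 sessions per variant, which is more than four months of traffic for a site with 10,000 sessions per month.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Sessions needed in EACH variant to detect the given relative lift.

    Normal-approximation formula for comparing two proportions, two-sided test.
    """
    p1 = baseline_rate                        # control conversion rate
    p2 = baseline_rate * (1 + relative_lift)  # variant rate we hope to detect
    p_bar = (p1 + p2) / 2                     # pooled rate under the null hypothesis
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def weeks_to_complete(n_per_variant, num_variants, monthly_sessions):
    """Weeks of traffic needed if sessions are split evenly across variants."""
    weekly_sessions = monthly_sessions * 12 / 52
    return n_per_variant * num_variants / weekly_sessions


if __name__ == "__main__":
    # Hypothetical inputs: 2% baseline conversion rate, 20% relative lift.
    n = sample_size_per_variant(baseline_rate=0.02, relative_lift=0.20)
    weeks = weeks_to_complete(n, num_variants=2, monthly_sessions=10_000)
    print(f"{n} sessions per variant, about {weeks:.1f} weeks at 10,000 sessions/month")
```

Halving the required sample (for example, by testing a bolder change with a larger expected lift) or increasing traffic shortens the test; adding more variants lengthens it proportionally.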

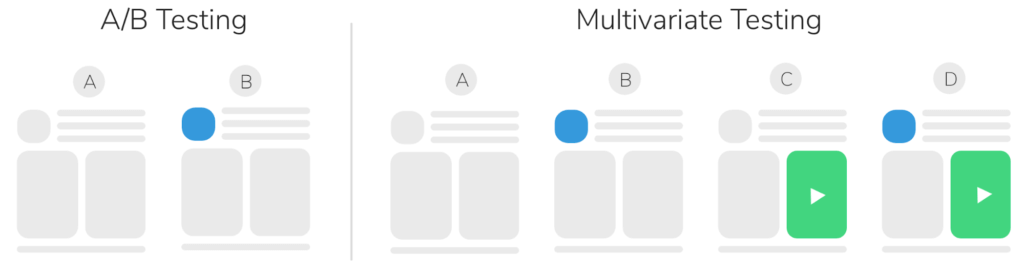

Websites that garner more than 10,000 unique sessions per month can begin to consider multivariate testing. Multivariate testing uses the same core concept as A/B testing, but it tests more variables at once and often reveals how those variables interact with each other. Each change, and each combination of changes, is tested against the control to reveal what works and why.
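One common way to structure a multivariate test is a full-factorial design, in which every combination of element versions becomes its own test cell. The sketch below assumes the three homepage elements from the earlier example, each with two hypothetical versions; the version names are made up for illustration. Three two-version elements produce 8 cells (one of which is the all-current control), so 10,000 monthly sessions split evenly leaves each cell about 1,250 sessions a month, versus 5,000 per variant in a simple two-variant A/B test.

```python
from itertools import product

# Hypothetical homepage elements and the versions of each under test.
# The first version listed for each element is the current (control) design.
ELEMENTS = {
    "hero_image": ["current", "lifestyle_photo"],
    "menu_order": ["current", "categories_first"],
    "button_color": ["current", "green"],
}


def full_factorial_cells(elements):
    """Every combination of element versions; each one is a separate test cell."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


def sessions_per_cell(monthly_sessions, elements):
    """Monthly sessions each cell receives if traffic is split evenly."""
    return monthly_sessions / len(full_factorial_cells(elements))


if __name__ == "__main__":
    for cell in full_factorial_cells(ELEMENTS):
        print(cell)
    print(sessions_per_cell(10_000, ELEMENTS), "sessions per cell per month")
```

Because the number of cells multiplies with every element added, each extra variable in a multivariate test thins out the traffic every cell receives.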

The image below illustrates how a multivariate test can incorporate several testing variables without compromising the clarity of the results.

If you have sufficient traffic, a multivariate test (MVT) can be a great way to experiment with multiple elements of a page simultaneously. Just keep in mind that MVTs often take more time to build and, because traffic is split across every combination being tested, usually take longer to reach statistical significance than a standard A/B test.

Note: Multivariate tests are still an option for smaller ecommerce sites, but turning results around in a reasonable time frame is much more challenging. Reaching statistical significance can take many weeks, if not months, which is why we find that running several smaller tests simultaneously tends to deliver better results in less time.

It’s Time to Get Testing

The idea of experimenting with dozens of different elements on your website at once may be tempting, but it will likely compromise the results of your test. Start small: explore how your audience behaves and interacts with your website, then move into larger, more adventurous tests once you’ve built a clearer profile of their behavior.

If your website doesn’t have the traffic to support a multivariate test, consider running a variety of smaller concurrent A/B tests until you reach the level of traffic required to run a larger test.

If you’re interested in conducting a testing program but don’t have the resources to manage it on your own, it may be time to consider working with a Conversion Rate Optimization agency. At The Good, we focus on optimizing website experiences for brands of all sizes (SMB to enterprise). Sign up for a free landing page teardown, where we’ll look at one key page on your website and provide actionable feedback on how you can begin to improve it.

About the Author

Ethan Cotton

Ethan has a background in academic marketing research, focusing on how the communication of product attributes impacts purchase motivations.