How to Validate Website Design Changes: A Decision Framework

Adopting a framework for deciding how to validate design changes can lead to 50% better win rates on A/B tests and faster time-to-market for improvements.

How do you know if that new homepage design, updated pricing page, or streamlined onboarding flow will actually improve conversions before you build it?

The default answer has been A/B testing. But while A/B testing remains the gold standard for high-stakes decisions, it’s not the right tool for every design change. Many teams fall into the trap of either testing everything (creating bottlenecks and slowing innovation) or testing nothing (making changes based purely on intuition).

There’s a better way. By understanding when different validation* methods are most appropriate, SaaS teams can make faster, more confident design decisions while maintaining the rigor needed for their most critical changes.

*Note: We know validation is a bad word in the research community because it implies “proving you’re right,” but we feel it’s easier to read and more quickly comprehensible for those not in research disciplines. We’re using “validation” in this article, but “evaluation” or “confirm or disconfirm” would be more accurate in other settings.

The real cost of a bad experimentation strategy

When teams lack a clear strategy for validating decisions, they create what researcher Jared Spool calls “Experience Rot” – the gradual deterioration of user experience quality from moving too slowly or focusing solely on economic outcomes rather than user needs.

The costs manifest in several ways:

- Opportunity cost: Market opportunities disappear while waiting for test results that may not even be necessary

- Resource waste: Development time gets tied up in prolonged testing initiatives for low-risk changes

- Analysis paralysis: Teams debate endlessly about what to test next instead of making decisions

- Competitive disadvantage: Competitors gain ground while you’re stuck in lengthy validation cycles

The key is matching your experimentation method to the decision you’re making, rather than forcing every design change through the same validation process.

A framework for design validation decisions

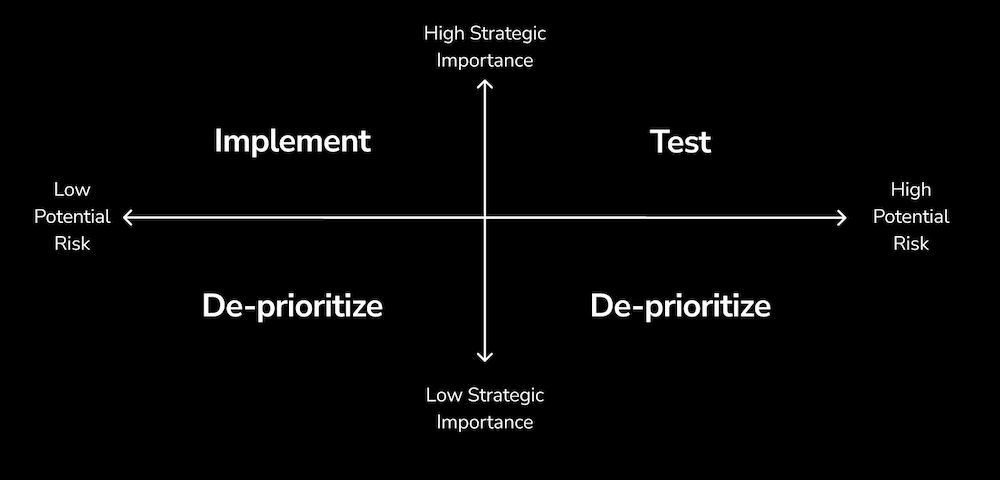

The path to better validation starts with two fundamental questions about any proposed design change:

- Is this strategically important? Does this change significantly impact key business metrics or user experience?

- What’s the potential risk? What happens if this change performs worse than expected?

Using these dimensions, you can map any design change into one of four validation approaches:

High Strategic Importance + Low Risk = Just ship it

If you can’t explain meaningful downsides to a design change but know it’s strategically important, you probably don’t need to validate it at all. These are your quick wins.

Examples for SaaS teams:

- Adding customer testimonials to your pricing page

- Improving mobile responsiveness

- Fixing broken links or outdated screenshots

- Adding clearer error messages in your product

Why this works: The upside is clear, the downside is minimal, and the time spent testing could be better invested elsewhere.

Low Strategic Importance = Deprioritize

Not every design change needs validation because not every change is worth making. Some modifications simply aren’t worth the time and resources, regardless of the validation method you might use.

Examples of low-impact changes:

- Minor color adjustments to non-critical elements

- Changing footer layouts

- Tweaking secondary page designs that get little traffic

- Adjusting spacing that doesn’t affect usability

When to reconsider: If data later shows these areas are creating friction, they can move up in priority.

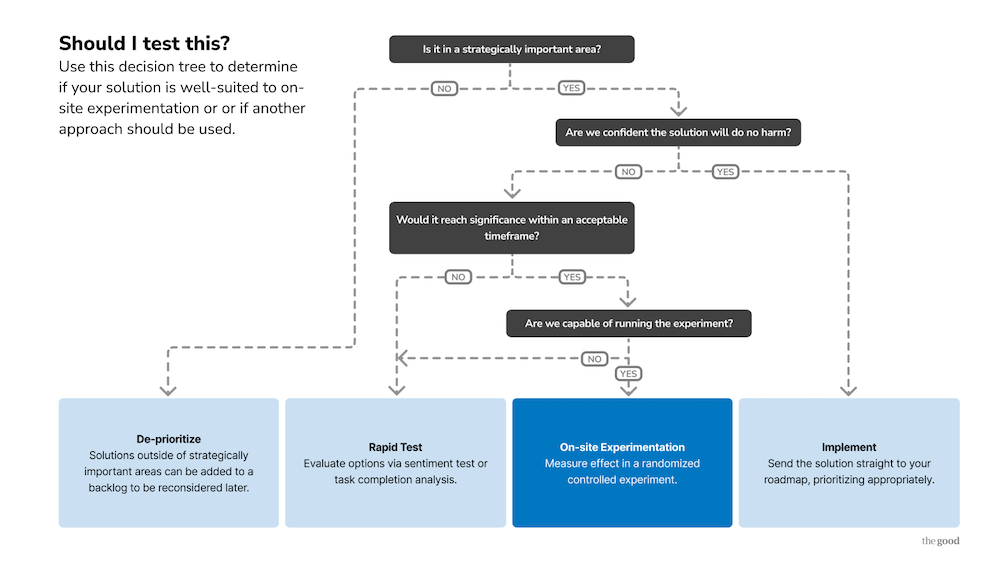

High Strategic Importance + High Risk = Validation territory

This is where both A/B testing and rapid testing methods become valuable. The critical next decision becomes: can you reach statistical significance within an acceptable timeframe, and are you technically capable of running the experiment?
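The quadrant mapping described in this section can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the names `Approach` and `choose_approach` are hypothetical, and reducing each dimension to a yes/no answer is a simplifying assumption.

```python
from enum import Enum


class Approach(Enum):
    """The three outcomes named in the framework (hypothetical labels)."""
    JUST_SHIP_IT = "just ship it"
    DEPRIORITIZE = "deprioritize"
    VALIDATE = "validate with A/B or rapid testing"


def choose_approach(strategically_important: bool, high_risk: bool) -> Approach:
    """Map a proposed design change onto the framework's quadrants."""
    if not strategically_important:
        # Low strategic importance: deprioritize, whatever the risk level.
        return Approach.DEPRIORITIZE
    if high_risk:
        # High importance and high risk: validation territory.
        return Approach.VALIDATE
    # High importance and low risk: a quick win.
    return Approach.JUST_SHIP_IT


# Example: adding testimonials to a pricing page (important, low risk).
print(choose_approach(strategically_important=True, high_risk=False).value)
```

In practice both questions are judgment calls, so a team might score them on a scale and agree on thresholds before reducing them to booleans like this.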

When to use A/B testing vs rapid testing

When to use A/B testing for design changes

A/B testing remains your best option for design changes when:

- You have sufficient traffic on the experience: Generally, you need 1,000+ visitors per week to the page being tested

- The change is reversible: You can easily switch back if the results are negative

- You need statistical confidence: Stakes are high enough to justify the time investment

- Technical capability exists: Your team can implement and track the test properly
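To check whether a test can reach statistical significance within an acceptable timeframe, it can help to estimate how long it would need to run. The sketch below uses the standard sample-size approximation for comparing two conversion rates. The function names, the 5% significance level, the 80% power, and the example inputs are all assumptions for illustration; a dedicated sample-size calculator or your testing platform's own estimate may differ.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per variant (two-sided two-proportion z-test)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # conversion rate you hope to detect
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_finish(weekly_visitors: int, baseline_rate: float,
                    relative_lift: float, variants: int = 2) -> int:
    """Estimate test duration in weeks, splitting traffic evenly across variants."""
    needed = visitors_per_variant(baseline_rate, relative_lift) * variants
    return math.ceil(needed / weekly_visitors)


# Hypothetical inputs: 1,000 visitors per week, 5% baseline, hoping for a 20% lift.
print(weeks_to_finish(weekly_visitors=1_000, baseline_rate=0.05, relative_lift=0.20))
```

If the estimate comes out longer than your team is willing to wait, that is a signal to consider a different method for that change.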

Examples of SaaS use cases for A/B testing:

- Complete homepage redesigns

- Pricing page layouts and messaging

- Sign-up flow modifications

- Core product onboarding changes

- High-traffic landing page variations

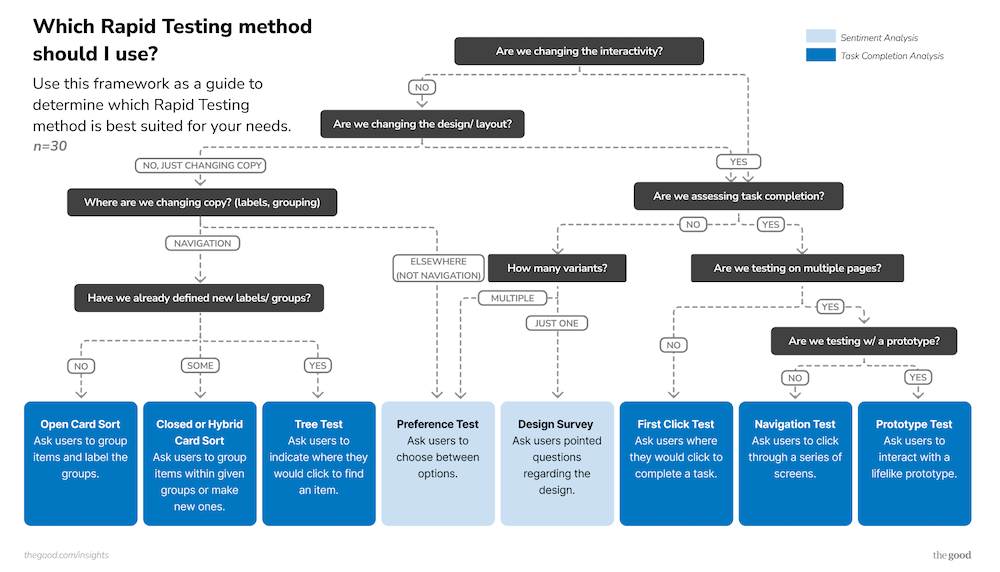

When to use rapid testing for design changes

When A/B testing isn’t right due to traffic constraints, technical limitations, or time pressures, rapid testing provides a faster path to validation.

Rapid testing methods work particularly well for SaaS design validation because they can:

- Validate concepts before development: Test wireframes and mockups before building

- Narrow down options: Compare multiple design variations quickly

- Identify usability issues: Spot problems before they reach real users

- Provide qualitative insights: Understand the “why” behind user preferences

Examples of SaaS use cases for rapid testing:

- New feature naming and messaging

- Dashboard navigation restructuring

- Enterprise sales page designs (low traffic)

- Value proposition clarity testing

- Multi-option comparisons (6-8 variations)
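The choice between A/B testing and rapid testing can be sketched as a simple checklist. This is a minimal sketch under stated assumptions: `DesignChange`, `choose_method`, and the field names are hypothetical, and the traffic threshold is the rough 1,000-visitors-per-week guideline from this article, not a hard rule.

```python
from dataclasses import dataclass

# Rough guideline from the article; adjust for your own traffic and goals.
MIN_WEEKLY_VISITORS = 1_000


@dataclass
class DesignChange:
    weekly_visitors: int               # traffic to the page being tested
    reversible: bool                   # can you switch back if results are negative?
    needs_statistical_confidence: bool # are the stakes high enough to justify the wait?
    can_implement_and_track: bool      # can the team run and measure the test properly?


def choose_method(change: DesignChange) -> str:
    """Return 'A/B test' only when every A/B criterion holds, else 'rapid test'."""
    ab_ready = (
        change.weekly_visitors >= MIN_WEEKLY_VISITORS
        and change.reversible
        and change.needs_statistical_confidence
        and change.can_implement_and_track
    )
    return "A/B test" if ab_ready else "rapid test"


# Example: a low-traffic enterprise sales page falls back to rapid testing.
enterprise_page = DesignChange(150, True, True, True)
print(choose_method(enterprise_page))
```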

The natural next question might be “which rapid testing method should I use?” Here is another decision tree framework to help answer that.

Incorporate your experimentation strategy into your design process

Once you have a strategy for deciding how and what to test, the next step is building it into your design process. The most successful SaaS teams don’t treat validation as an afterthought. They build it into their process from the beginning:

- During ideation: Use rapid testing to validate concepts and narrow options before detailed design work

- During design: Test wireframes and mockups to identify issues before development

- Before launch: Use A/B testing for high-stakes changes, rapid testing for others

- After launch: Continue testing iterations based on user feedback and performance data

The compounding benefits of a sound experimentation strategy

The goal isn’t to replace A/B testing with rapid methods or vice versa. Both have their place in a mature experimentation strategy. The key is understanding when each approach provides the most value for your specific situation and constraints.

Teams that master this balanced approach to validation see remarkable improvements, including:

- 50% better A/B test win rates (because rapid testing helps identify winning concepts)

- Faster time-to-market for design improvements

- More confident decision-making across the organization

- Better team morale from seeing results from their work more quickly

Perhaps most importantly, they avoid the extremes of either testing nothing (high risk) or testing everything (slow progress).

For SaaS teams serious about optimization, the question isn’t whether to validate design changes; it’s whether you’re using the right validation method for each decision.

Start by auditing your current design change process. Are you testing changes that should be implemented immediately? Are you implementing changes that should be tested? By aligning your validation approach with the strategic importance and risk level of each change, you can move faster without sacrificing confidence in your decisions.

And if you aren’t sure how to get started, our team can help.

About the Author

Natalie Thomas

Natalie Thomas is the Director of Digital Experience & UX Strategy at The Good. She works alongside ecommerce and product marketing leaders every day to produce sustainable, long-term growth strategies.