How to Use the Double Diamond UX Research Framework to Solve Real Problems

We are sharing our interpretation of this crucial UX research framework for teams that need answers, not just data.

When teams reach out to The Good, they’re usually facing a specific challenge: conversion rates have plateaued, a new feature isn’t getting adopted, or they’re not sure which direction to take with a redesign.

They don’t need research for research’s sake. They need a clear path from “we have a problem” to “here’s what to do about it.”

Research should systematically move you from understanding problems to implementing solutions. No guesswork. No dead ends. Just a repeatable process that gets you actionable answers.

That’s what a double diamond UX research framework does.

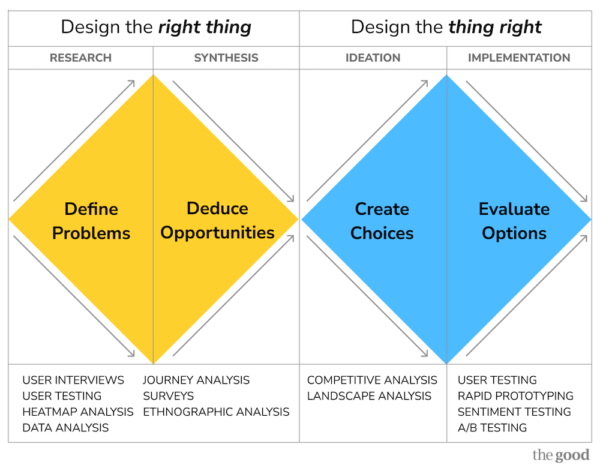

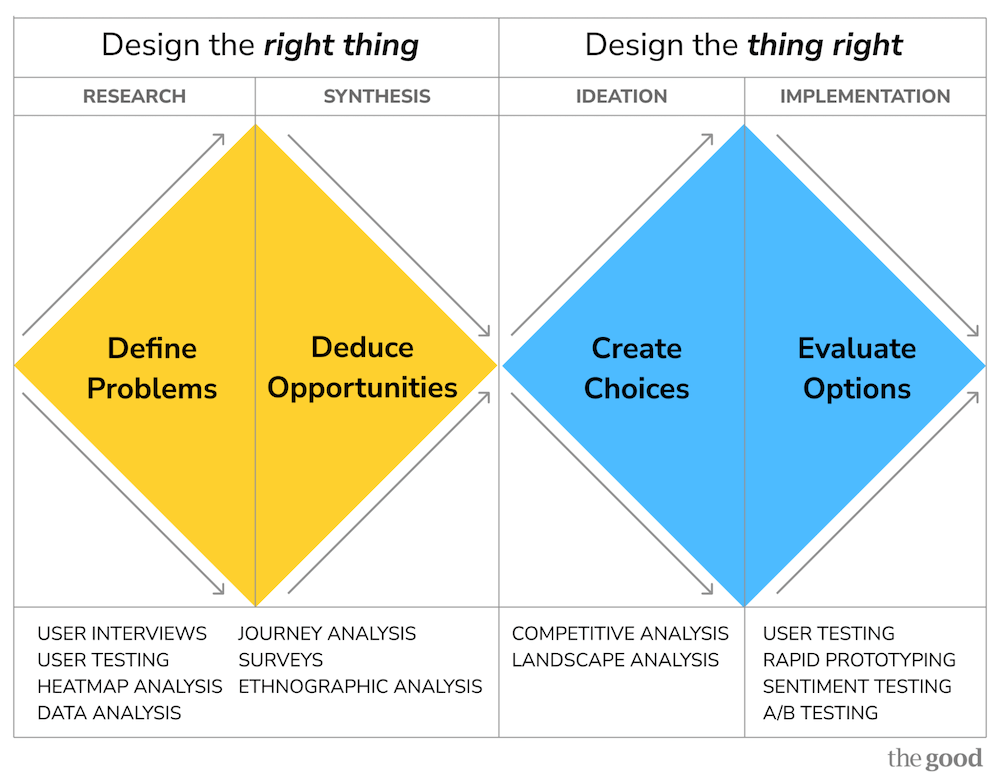

The double diamond UX research framework: design the right thing, then design the thing right

We wanted to share more about the double diamond UX research framework after seeing too many teams jump straight from problem to solution, without doing the work of understanding whether they’re solving the right problem in the first place.

The framework works because it forces teams to slow down in the right places and speed up in others. It is named for its diamond shape, which represents diverging to explore, then converging to decide.

You do this twice. First to ensure you’re solving the right problem, and then again to ensure you’re solving it the right way.

The first diamond is to design the right thing:

Expand your understanding of the problem space, then narrow it to the most important opportunities.

- Define problems (research)

- Deduce opportunities (synthesis)

The second diamond is to design the thing right:

Explore multiple solution approaches, then validate which one actually works.

- Create choices (ideation)

- Evaluate options (implementation)

Each phase has a specific purpose, uses different research methods, and answers different questions.

When to use this framework

This UX research framework works best when:

- You’re stuck. Something’s not working, and you’re not sure why. The framework helps you diagnose root causes instead of treating symptoms.

- You have options. You’ve got a few directions you could pursue, but need validation before committing resources.

- Stakes are high. You’re making changes to core experiences, entering new markets, or redesigning critical flows where getting it wrong is expensive.

- You want speed without guesswork. You need answers quickly but can’t afford to be wrong.

- You need team alignment. Different stakeholders have different opinions, and you need objective data to move forward.

How it works phase by phase

But of course, this framework is only useful if you know how to apply it! Here’s a breakdown of each phase: when you need it, which research methods to use, and real examples of how we’ve used it to solve specific problems.

Phases 1-2: Design the right thing (generative research)

The first diamond uses the same research toolkit for both defining problems and deducing opportunities. What changes is how you apply these methods:

Phase 1 (Define Problems): You’re gathering data, uncovering what’s broken, and understanding the full scope of user pain points.

Phase 2 (Deduce Opportunities): You’re synthesizing that data, identifying patterns, and prioritizing which problems matter most.

Research toolkit:

- User interviews: Understand motivations, mental models, and pain points

- User testing: Watch real users interact with your product to identify friction

- Heatmap analysis: See where users actually click, scroll, and spend time

- Data analysis: Identify patterns in behavior and correlations with outcomes

- Journey analysis: Map complete user experiences to find friction points

- Surveys: Gather quantitative data on behaviors and preferences

- Ethnographic analysis: Observe users in their natural context to understand real-world behavior

Phase 1: Define problems through generative research

Before you can solve anything, you need to understand what’s actually broken. That’s where generative research comes in: it’s designed to uncover opportunities and pain points you might not even know exist.

When you need this phase:

- You know something’s not working, but can’t pinpoint what

- You’re entering a new market or launching a new product

- Conversion rates have plateaued, and you’re not sure why

- You’re getting conflicting feedback from different user segments

Case study:

The problem: A SaaS productivity platform noticed that users who adopted their collaboration feature had 40% better retention, but only 12% of new users ever tried it. The product team had already attempted several fixes, including in-app tooltips, email campaigns, and adding the feature to the main navigation, but none moved the adoption needle. They knew they had a problem, but couldn’t pinpoint the root cause.

What we did: We ran user testing with 20 recent sign-ups, watching them complete typical workflows while thinking aloud. We analyzed heatmap data to see where users looked during key moments. We conducted interviews with 12 users (6 who adopted the feature, 6 who didn’t) to understand their mental models and pain points. Finally, we analyzed behavioral data across 50,000 user sessions to identify patterns in how people discovered features.

What we found: Most users saw the feature prompts, so the issue wasn’t awareness. The problem was timing and relevance. Users ignored prompts for the collaboration feature when they appeared during solo work. But when users received documents with stakeholder comments, they actively looked for ways to coordinate feedback. The feature solved a real problem, but only at specific moments. Showing it at the wrong time made it feel like noise.

Phase 2: Deduce opportunities through synthesis

Raw research data doesn’t solve problems, but synthesis does. This phase is about taking everything you learned and distilling it into clear, prioritized opportunities.

When you need this:

- You have research findings, but need to prioritize

- Stakeholders are divided on which direction to pursue

- You need to build a business case for investment

- You’re trying to align multiple teams around a shared strategy

Case study:

What we did: We took the same data from Phase 1 but shifted our analysis to look for patterns and opportunities. We mapped user journeys to identify the specific trigger moments when collaboration pain became acute. We segmented users by workflow type to understand who encountered collaboration needs most frequently. We analyzed the correlation between feature discovery timing and adoption rates.

The opportunity: Instead of promoting the feature broadly or adding more persistent navigation, we identified a single high-value trigger: show the collaboration feature contextually when users open documents containing comments or tracked changes. This represented the precise moment when users experienced the pain this feature solved.

The impact potential: Based on session data, 43% of new users encountered stakeholder-reviewed documents within their first week. If we could convert just 50% of those moments into feature trials, we’d nearly triple overall adoption.
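The "nearly triple" projection is simple arithmetic on the figures above. Here is a minimal sketch that reproduces it, assuming that the triggered trials come from users who would not otherwise have tried the feature, so they add to the 12% baseline (the case study doesn't say how the two groups overlap). The constant and function names are hypothetical, chosen for illustration.

```python
# Back-of-envelope model of the case-study projection (illustrative only).
# Assumption, not stated in the case study: triggered trials come from users
# who would not have tried the feature otherwise, so they add to the baseline.

BASELINE_ADOPTION = 0.12   # share of new users who try the feature today
TRIGGER_EXPOSURE = 0.43    # share who open a stakeholder-reviewed document in week one
TRIGGER_CONVERSION = 0.50  # hoped-for share of those moments that become trials


def projected_adoption(baseline: float, exposure: float, conversion: float) -> float:
    """Return projected adoption, assuming triggered trials don't overlap the baseline."""
    return baseline + exposure * conversion


projected = projected_adoption(BASELINE_ADOPTION, TRIGGER_EXPOSURE, TRIGGER_CONVERSION)
lift = projected / BASELINE_ADOPTION  # multiple of today's adoption rate
print(f"Projected adoption: {projected:.1%} ({lift:.1f}x baseline)")
```

Under that assumption, about 21.5% of new users would try the feature through the contextual trigger, putting total adoption near 33.5%, roughly 2.8 times the 12% baseline. If many of the triggered users would have found the feature anyway, the real lift would be smaller.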

What happened next: We had a clear, data-backed opportunity. Now we needed to figure out how to present this feature at that contextual moment. That’s where Phase 3 came in.

Phase 3: Create choices through competitive analysis

Once you know what problems to solve, you need to explore how to solve them. This phase is about generating multiple possible solutions and understanding how others have approached similar challenges.

Research toolkit:

- Competitive analysis: Study how industry leaders solve similar problems

- Landscape analysis: Identify patterns across different approaches

When you need this:

- You need to generate and compare possible design directions

- Stakeholders want to see options before committing

- You’re redesigning an existing feature and need alternatives

- You’re unsure which approach will resonate with users

Case study:

The problem: We knew when to show the collaboration feature, but we didn’t know how. Should it be a modal that interrupts the workflow? A subtle banner? A tutorial overlay? Each approach had trade-offs, and stakeholders had different opinions about what would work.

What we did: We conducted a competitive analysis of 12 productivity and collaboration tools, studying how they introduced features at contextual moments. We analyzed landscape patterns across project management, document editing, and communication platforms to understand what users had learned to expect.

What we found: The highest-performing patterns shared three characteristics:

- They acknowledged the user’s current task (“We see you’re reviewing comments”)

- They offered immediate value related to that task (“Collect all feedback in one place”)

- They provided a low-friction way to try (“Add your first comment now”)

The choices we created: Based on these patterns, we designed three variations:

- Inline prompt: A compact banner within the document that appeared next to existing comments

- Modal introduction: A full-screen overlay explaining the feature with a demo

- Progressive disclosure: A subtle sidebar element that expanded when users hovered near comments

Where this led: We had three viable approaches grounded in what worked for others. In Phase 4, we tested which resonated most with actual users.

Phase 4: Evaluate options

Before you ship, you need to validate. This final phase uses evaluative research methods to test solutions quickly and make confident decisions.

Research toolkit:

- User testing: Validate whether users can actually complete tasks

- Prototype testing: Test concepts before full development

- Rapid testing: Get fast feedback on specific design decisions (preference tests, first-click tests, tree tests, design surveys, card sorts)

- Sentiment testing: Measure emotional response to different approaches

- A/B testing: Compare variations with real users in production

When you need this:

- You have a few strong directions and need to pick one

- You want to validate before investing in full development

- You need fast feedback to maintain momentum

- You’re making changes to high-traffic, high-value experiences

Case study:

The problem: All three approaches looked good on paper, but we needed to know which one users would actually respond to. We didn’t have time to build and A/B test all three variations in production. We needed a faster way to validate the direction before investing in full development.

What we did: We created rapid prototypes of all three approaches and ran user testing with 24 people who matched our target audience. We showed each user a realistic scenario: opening a document with stakeholder comments. Then we measured task completion (could they figure out how to use the collaboration feature?), time to first action (how quickly did they engage?), and sentiment (how did they feel about the interruption?).

What we found: The inline prompt won on every metric. Users described it as “helpful” and “right when I needed it,” while the modal felt “intrusive” and progressive disclosure was “too easy to miss.”

The decision: The team built and shipped the inline prompt, and feature adoption increased.

Why this worked: By following all four phases of the double diamond UX research framework, we didn’t just guess at a solution. We systematically moved from “we have a problem” (low adoption) to “here’s the root cause” (timing and relevance) to “here’s how others solve this” (contextual introduction patterns) to “here’s proof this will work” (validated direction through testing). Each phase built on the last, reducing risk at every step.

Why this UX research framework works

The double diamond framework prevents the most common research mistakes:

It prevents solution jumping

Teams often skip straight from “we have a problem” to “here’s what we’ll build.” The framework forces you to first understand the problem deeply (Phase 1) and synthesize opportunities (Phase 2) before exploring solutions (Phase 3) and validating them (Phase 4).

It matches research methods to questions

Generative research (Phases 1-2) answers “what problems exist?” The second diamond (Phases 3-4) explores possible solutions, then uses evaluative research to answer “does this solution work?” Using the wrong method for your question wastes time and money.

It creates natural checkpoints

Each phase ends with a clear deliverable: defined problems, prioritized opportunities, multiple solutions, or validated direction. Stakeholders can weigh in at the right moments without derailing progress.

It scales to any timeline

Need an answer this week? Run a rapid test. Have a month? Go deeper with interviews and competitive analysis. Have a quarter? Complete the full cycle. The framework adapts to your constraints while maintaining rigor.

How you can adapt the framework to increase revenue from your digital experience

While it would be ideal to run the full framework on all product decisions, we don’t always have the luxury of time. Here’s how we typically adapt the framework depending on project needs.

Fast decision (1-2 weeks): Skip straight to Phase 4 with rapid testing when you have clear options and need directional data quickly.

Optimization project (2-4 weeks): Focus on Phases 1-2 (define problems, deduce opportunities) when you need to diagnose what’s broken and prioritize fixes.

Strategic initiative (4-8 weeks): Full cycle through all four phases when you’re entering new territory or making high-stakes changes.

Ongoing partnership: Rotate through phases as needs evolve. We may use competitive analysis this month, user testing next month, and rapid testing the month after.

The key is matching research depth to risk and constraints.

You’re not trying to run the most comprehensive study possible. Instead, you’re trying to give yourself the confidence to make the right decision with the time and budget you actually have.

Ready to solve your research challenge?

The double diamond framework gives you a proven way to move from problem to solution. Whether you need a quick rapid test to validate a design decision or a comprehensive research engagement to inform strategy, we adapt this framework to your specific needs.

The teams we work with don’t need more data; they need clarity. They need to know what’s broken, why it matters, and what to do about it. That’s exactly what this UX research framework delivers.

And when you work with The Good, here’s what research deliverables look like:

- Problem definition: Clear documentation of user pain points, friction in the experience, and opportunities ranked by impact and effort.

- Competitive analysis: Side-by-side comparison of how others solve similar problems, with specific recommendations for what to adopt, adapt, or avoid.

- User testing results: Video clips of real users, annotated with insights, organized by finding severity, and accompanied by specific design recommendations.

- Rapid test reports: Statistical analysis of user preferences with clear winners, qualitative feedback explaining why users chose what they did, and next-step recommendations.

- Strategic recommendations: Prioritized roadmap of what to build, test, or optimize based on research findings and business goals.

Everything is actionable. We don’t just tell you what we found; we tell you what to do about it.

Want to see how this framework would apply to your specific challenge? Let’s talk about your research needs.

About the Author

Natalie Thomas

Natalie Thomas is the Director of Digital Experience & UX Strategy at The Good. She works alongside ecommerce and product marketing leaders every day to produce sustainable, long-term growth strategies.