What is CRO? Conversion Rate Optimization Explained

You’re probably already spending money to drive traffic to your website. Paid ads. SEO. Social. Email. The question isn’t whether people are showing up; it’s whether they’re doing anything when they get there.

That’s where conversion rate optimization comes in.

Conversion rate optimization (CRO) is the process of increasing the percentage of visitors who take a desired action on your website or app. That action could be making a purchase, starting a free trial, signing up for a demo, or completing a registration form. Rather than spending more to acquire new visitors, CRO gets more value out of the traffic you already have.

The math is straightforward. If your site converts 2% of 10,000 monthly visitors, you get 200 customers. Improve that rate to 4% with the same traffic and the same ad spend, and you get 400. Assuming order values hold steady, you’ve doubled revenue without spending another dollar on acquisition.
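To make the arithmetic concrete, here is a minimal sketch in Python. The function name `expected_conversions` is illustrative, and the figures are the ones from the example above.

```python
def expected_conversions(visitors: int, conversion_rate: float) -> float:
    """Expected number of conversions for a given traffic volume and rate."""
    return visitors * conversion_rate


monthly_visitors = 10_000

baseline = expected_conversions(monthly_visitors, 0.02)  # 2% conversion rate
improved = expected_conversions(monthly_visitors, 0.04)  # 4% conversion rate

# Relative lift: how much larger the improved result is than the baseline.
relative_lift = (improved - baseline) / baseline

print(f"Baseline: {baseline:.0f} customers")
print(f"Improved: {improved:.0f} customers")
print(f"Relative lift: {relative_lift:.0%}")
```

Note that the lift applies to the number of conversions. Revenue only doubles if the average value of each conversion stays the same.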

But CRO isn’t just button colors and headline tests. Done well, it’s a research-backed discipline that uncovers why people aren’t converting and fixes the root cause, not just the symptom. And when it’s done really well, it evolves into something bigger. More on that below.

CRO by the numbers: What the benchmarks tell you

Before you optimize, you need to know what you’re optimizing against. Here are five benchmarks worth knowing:

1. The average ecommerce conversion rate sits around 1.8–2%.

According to IRP Commerce data, the average ecommerce conversion rate in 2025 is approximately 1.8%. Shopify reports a higher range of 2.5–3% for stores on its platform, with the top 20% converting above 3.2%. If you’re below 2%, you likely have fixable problems.

2. B2B SaaS conversion rates are even lower, around 1.1–1.2%.

Software and B2B companies consistently land at the bottom of industry benchmarks (First Page Sage, 2025). Longer decision cycles and complex buying committees drive this down. And because each conversion is worth far more, every friction point in your funnel costs you more than it would for a $30 consumer product.

3. Seven out of every ten online shoppers abandon their cart.

Baymard Institute’s aggregate of 50 cart abandonment studies puts the global average at 70.22%. The leading causes: unexpected costs at checkout (48%), mandatory account creation (26%), and overly complicated checkout flows (18%). Baymard also estimates that better checkout design alone could recover $260 billion in lost orders annually in the US and EU.

4. Companies that invest in CRO see an average ROI of 223%.

Despite this return, research shows companies spend only $1 on conversion optimization for every $92 they spend acquiring new visitors (Econsultancy, via DemandSage). The asymmetry is remarkable. Fewer than 40% of businesses have a formally documented CRO strategy, which means there’s significant competitive advantage available to those who do.

5. Top-performing landing pages convert at 5–12% or higher.

The industry average for landing page conversion sits around 2.35%, but the top 25% of websites convert at 5.31% or more, and the top 10% exceed 11%, according to DemandSage’s CRO statistics. There’s a wide gap between average and excellent, and that gap is almost always a CRO problem.

How CRO works: A 7-step process

CRO is a process, not a one-time project. Here’s how it works when it’s done right:

Step 1: Define your goals and conversion events

You can’t optimize what you haven’t defined. Before anything else, identify which actions matter most (purchases, sign-ups, demo requests, free trial activations) and set up tracking to measure them accurately. Fuzzy goals produce fuzzy results.

Step 2: Audit your baseline data

Pull your current conversion rates, bounce rates, exit pages, and funnel drop-off points. This gives you a map of where people are falling out before taking action. Google Analytics, heatmapping tools, and session recordings all serve a role here.
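As a rough illustration of a funnel drop-off audit, the sketch below computes the share of visitors lost between each pair of adjacent steps. The step names and counts are made-up sample data, and `funnel_dropoff` is a hypothetical helper, not part of any analytics tool.

```python
def funnel_dropoff(steps):
    """Return (from_step, to_step, drop_off_rate) for each adjacent pair of steps.

    `steps` is an ordered list of (step_name, visitor_count) tuples.
    """
    report = []
    for (name_a, count_a), (name_b, count_b) in zip(steps, steps[1:]):
        # Share of visitors at step A who never reached step B.
        rate = 1 - count_b / count_a if count_a else 0.0
        report.append((name_a, name_b, rate))
    return report


# Hypothetical monthly counts exported from an analytics platform.
funnel = [
    ("Product page", 10_000),
    ("Add to cart", 1_500),
    ("Checkout", 600),
    ("Purchase", 200),
]

report = funnel_dropoff(funnel)
for from_step, to_step, rate in report:
    print(f"{from_step} -> {to_step}: {rate:.1%} drop-off")

# The step with the largest drop-off is a natural place to start research.
worst = max(report, key=lambda row: row[2])
print(f"Largest drop-off: {worst[0]} -> {worst[1]}")
```

The largest drop-off tells you where to look first. It does not tell you why people leave, which is what the next step is for.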

Step 3: Conduct user research

This is the step most CRO programs skip, and it’s usually the most important. Analytics tells you where people drop off. Research tells you why. Qualitative methods like user testing, surveys, and interviews surface friction points, confusion, and unmet needs that no dashboard will ever show you.

Step 4: Form hypotheses

Based on what your research uncovers, write specific, testable hypotheses. Not “let’s change the headline” but “changing the headline to focus on the outcome rather than the feature will increase click-through because users currently don’t understand what they’re getting.” The more specific the hypothesis, the more useful the result, win or lose.

Step 5: Design and prioritize experiments

Not all tests are worth running. Prioritize based on potential impact, confidence in your hypothesis, and ease of implementation. This is where structured prioritization frameworks help teams decide where to spend their testing bandwidth instead of chasing whoever made the loudest request in the last meeting.
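One way to apply those three criteria is a simple scoring sheet. The sketch below assumes each idea is rated 1 to 10 on impact, confidence, and ease, and that the score is the plain average of the three. That averaging rule is one common convention, not the only one, and the ideas and ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    name: str
    impact: int      # 1-10: how much could this move the target metric?
    confidence: int  # 1-10: how strongly does research support the hypothesis?
    ease: int        # 1-10: how cheap is it to design, build, and run?

    @property
    def score(self) -> float:
        # Assumption: an unweighted average of the three ratings.
        return (self.impact + self.confidence + self.ease) / 3


# Hypothetical backlog with made-up ratings.
backlog = [
    ExperimentIdea("Rewrite hero headline", impact=5, confidence=4, ease=9),
    ExperimentIdea("Offer guest checkout", impact=9, confidence=8, ease=3),
    ExperimentIdea("Show shipping cost on product page", impact=8, confidence=7, ease=6),
]

# Highest score first.
ranked = sorted(backlog, key=lambda idea: idea.score, reverse=True)
for idea in ranked:
    print(f"{idea.score:.1f}  {idea.name}")
```

The scores are only as good as the ratings behind them, so the confidence rating should come from your research rather than from opinion.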

Step 6: Run A/B tests (and other experiments)

With your hypotheses and designs in hand, launch your experiments. Give them enough time and traffic to reach statistical significance. Ending tests early because the results look promising is one of the most common mistakes in CRO. Patience and rigor protect the quality of your decisions.
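For readers who want to see what “statistical significance” means in practice, here is a minimal sketch of a two-sided, two-proportion z-test using only Python’s standard library. Testing platforms may use different statistical methods, so treat this as an illustration rather than a description of any specific tool. The visitor and conversion counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    conv_a / n_a: conversions and visitors for the control.
    conv_b / n_b: conversions and visitors for the variant.
    Returns (z, p_value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: control converts 200 of 10,000, variant 250 of 10,000.
z, p_value = two_proportion_z_test(200, 10_000, 250, 10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
print("Significant at the 5% level" if p_value < 0.05 else "Not significant at the 5% level")
```

This calculation assumes the sample size was fixed before the test started. Checking the p-value repeatedly and stopping the moment it dips below 0.05 inflates the false-positive rate, which is why ending tests early is risky.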

Step 7: Analyze, implement, and iterate

A winning test is only valuable if you act on it and learn from it. Document what worked, what didn’t, and why. Roll out winners. Use losing tests as research inputs for the next round. The best CRO programs are continuous. Every test makes the next one better.

What CRO is not

CRO has accumulated a lot of mythology. A few things worth clearing up:

CRO is not just A/B testing. In practice, many teams use “CRO program” and “testing program” interchangeably. That’s the problem. Testing is one tool in the toolkit. A CRO program without research is just guessing with a spreadsheet.

CRO is not tricks. Dark patterns and manipulative tactics can technically lift conversion rates short term. They also destroy trust and increase churn. Sustainable CRO removes friction and builds confidence; it doesn’t manufacture false urgency.

CRO is not a one-time engagement. Running a few tests and calling it done is a project. Real CRO is a continuous capability that compounds over time. Each experiment builds institutional knowledge that makes future optimization faster and more effective.

CRO is not a silver bullet for broken acquisition. If the wrong people are arriving at your site, optimizing the experience will only do so much. CRO works best when you have defined audiences, solid traffic, and a product people actually want.

CRO vs. Digital Experience Optimization (DXO): What’s the difference?

Conversion rate is a useful metric. It’s also a product of hundreds of factors, many of which are outside your direct control, like market conditions, competition, seasonality, and traffic quality. Treating conversion rate as the single north star can lead teams to optimize for the number rather than the experience generating it.

Jon MacDonald, founder of The Good, put it plainly: “Websites and apps won’t reach their full potential under the current industry expectations and implementations of conversion rate optimization.”

The issue isn’t that conversion rates don’t matter. It’s that how you improve them can’t be reduced to one research method or one metric. One number can’t signal the health of your entire digital product.

Digital Experience Optimization (DXO) is the evolved approach. Where CRO focuses on increasing a specific conversion event, DXO looks at the entire digital journey, from the moment a user enters your site or app through post-conversion, to find and fix what’s actually preventing growth.

DXO practitioners are excellent at A/B testing, but they also:

- Pull back from conversion metrics to understand and improve the whole digital journey

- Incorporate qualitative and behavioral research to supplement what A/B testing can reveal

- Leverage expertise across UX, strategy, data, and design to drive sustainable improvement

- Build a culture of data-informed, user-centered decision-making inside your organization

Think of CRO as optimizing a specific page or funnel step. DXO optimizes the entire experience and does it with the research rigor needed to make decisions you can be confident in.

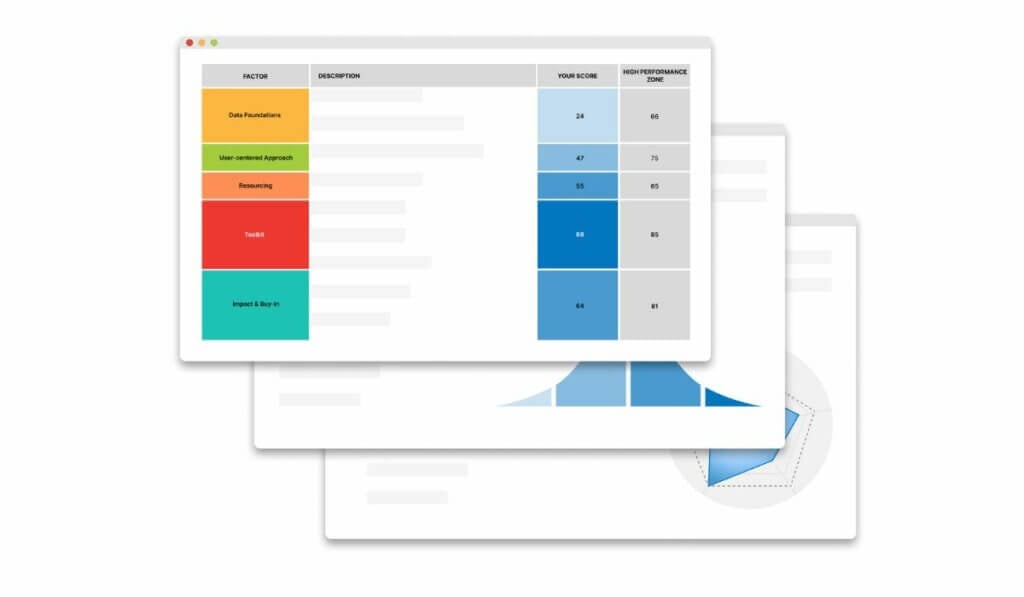

How The Good measures DXO: The 5-Factors Scorecard™

Measuring a digital experience is admittedly harder than watching a single conversion metric tick up or down. That’s why we built the 5-Factors Scorecard™, a proprietary framework based on a study of hundreds of digital leaders’ optimization challenges. It identifies the five factors the highest-performing companies have in common.

1. Data Foundations: Goals, ownership, and data quality form the backbone of any optimization effort. If your data can’t be trusted, your decisions can’t be either.

2. User-Centered Approach: A comprehensive research roadmap and a high-context understanding of customer behavior. Decisions driven by what users actually do, not what teams assume they do.

3. Resourcing: The right resources in place to support adequate capabilities and pace. Optimization programs that are under-resourced stall; this factor surfaces where gaps exist.

4. Toolkit: A healthy variety of tools for planning, measurement, and testing protocols. Not one tool used for everything, but the right tool for each job.

5. Impact & Buy-In: Practices and outputs that increase organizational relevance and perceived efficacy of optimization efforts. The best program in the world doesn’t move the needle if no one acts on it.

Research shows that improvement across these five factors leads to measurable business outcomes and gives teams a far more actionable picture of where to focus than conversion rate alone.

What optimization looks like in practice

The difference between an optimization program that plateaus and one that compounds usually comes down to whether research is leading the work.

Teams that rely on best-practice templates and gut-feel tests tend to see early wins followed by diminishing returns. They run the obvious tests, exhaust the low-hanging fruit, and then wonder why nothing is moving anymore. Teams that invest in genuinely understanding their users (what they’re trying to do, where they’re getting confused, what’s making them hesitate) keep finding new things to improve because they’re actually learning.

Here’s what that looks like across a few different contexts:

A media company rebuilding for digital subscribers. The Atlanta Journal-Constitution came in with 24,000 digital subscribers and a conversion problem they couldn’t solve with dashboards alone. By redesigning the experience around actual user behavior and intent, they grew to over 101,000 digital subscribers. The work wasn’t about finding a better CTA color. It was about understanding what readers needed to feel confident committing to a subscription.

A global software company moving the needle on engagement. Adobe’s product marketing team brought in The Good when they needed a fresh perspective on customer engagement. As Aditya Lakshminarayan, Product Marketing Manager at Adobe, put it, The Good’s recommendations helped move the needle on customer engagement and drive product growth, not through guesswork but through research expertise and insight the team couldn’t generate internally.

A creator-education business connecting optimization to the bottom line. Marie Forleo, founder of B-School and MarieTV, described The Good’s approach as investigative analytics she’d never seen before: the ability to dive in, research, analyze, and translate findings into action that gets real results for the business and bottom line.

The pattern across all of these is the same: research first, testing second, results that hold.

The Good has 17+ years of optimization experience working with companies including Adobe, The Economist, and Xerox. Our work spans ecommerce and SaaS, and our methodology is built on one consistent principle: research before testing.

Our flagship Digital Experience Optimization Program™ starts with a full-funnel analysis of your digital experience, including things like heatmap analysis, session recordings, and usability testing, to diagnose your specific challenges and prescribe a solution. We identify the biggest barriers and opportunities thematically, then build a custom program that includes everything you need (and nothing you don’t) to create an engaging experience and a better digital product.

Frequently asked questions about CRO

What is a good conversion rate?

It depends on your industry, traffic source, and the action you’re measuring. For ecommerce, 2–4% is a reasonable general benchmark. For SaaS, you might be optimizing trial-to-paid rate, feature adoption, or time-to-value rather than a single purchase transaction. The most useful benchmark is your own historical performance. Consistently improving your baseline is more meaningful than hitting an industry average.

How long does CRO take to see results?

Simple UX fixes and checkout improvements can show results within weeks. A structured A/B testing program typically needs 2–4 weeks per test to reach statistical significance at moderate traffic volumes. Compound results from a continuous program build over 3–6+ months.
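To see where a figure like 2–4 weeks can come from, the sketch below estimates the sample size needed per variant and converts it into a run time. It uses a standard normal-approximation formula for comparing two proportions, with a 5% significance level and 80% power as assumed defaults. The baseline rate, target lift, and weekly traffic are hypothetical inputs, and the function names are illustrative.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant to detect a given relative lift.

    Uses the normal approximation for a two-sided test of two proportions.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_run(n_per_variant, weekly_visitors, variants=2):
    """Whole weeks needed to send enough traffic to every variant."""
    return math.ceil(n_per_variant * variants / weekly_visitors)


# Hypothetical inputs: 2% baseline, aiming to detect a 20% relative lift (2.0% -> 2.4%).
n = sample_size_per_variant(baseline_rate=0.02, relative_lift=0.20)
weeks = weeks_to_run(n, weekly_visitors=15_000)
print(f"Visitors needed per variant: {n}")
print(f"Estimated run time: {weeks} weeks")
```

Smaller expected lifts or lower traffic push the run time up quickly, so it is worth running this estimate before committing to a test.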

Does CRO work for B2B and SaaS companies?

Yes, and it’s often more impactful than in ecommerce because the value per conversion is much higher. For SaaS, CRO typically focuses on registration flow, onboarding experience, trial-to-paid conversion, and reducing early churn rather than a single purchase event.

What’s the difference between CRO and UX?

UX is the broader discipline of designing products that are useful, usable, and satisfying. CRO is the practice of improving the rate at which users complete specific actions. The two are deeply connected, and the best CRO is grounded in UX research, but CRO is more metrics-focused and experiment-driven.

What tools do you need for CRO?

At minimum: an analytics platform (Google Analytics 4 or equivalent), a testing platform (Convert, VWO, or similar), and qualitative research tools (session recordings, user testing, surveys). The tools matter far less than having a structured process and the research discipline to inform what you test.

When should a company move from CRO to DXO?

When you’ve hit the ceiling of what single-metric optimization can deliver. When your testing program produces incremental wins but can’t explain why users behave the way they do. When you need buy-in from stakeholders and a framework that connects optimization work to broader business goals. That’s when a full DXO approach pays for itself.

Ready to get started?

The web has changed, and so has the way users experience platforms, stores, and media. If you want to keep up, your approach has to evolve too.

Whether you’re just getting started with CRO or you’re ready to move beyond it, The Good can help. Our Digital Experience Optimization Program™ is purpose-built for SaaS companies that have found product-market fit and are ready to scale. We bring the research rigor, strategic framework, and testing expertise to turn optimization into a sustainable growth engine.